March 21st, 2026

Added Auto strength node

Instead of applying one flat strength across the whole network and guessing whether it's too much or too little, the node reads what's actually in the LoRA file and adjusts each layer individually. The result is output that sits closer to what the LoRA was trained on, with better feature retention and without the blown-out or washed-out look you get from just cranking up or dialing back global strength.

One knob. Set your overall strength, everything else is handled.
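To make the "one knob, per-layer adjustment" idea concrete, here is a rough sketch. It is not the node's actual algorithm, which isn't spelled out here. It assumes a hypothetical heuristic: measure how large each layer's LoRA delta is, compare it to the median layer, and scale the single global strength up or down within clamped bounds. The function name, the `low`/`high` limits, and the use of a norm are all assumptions for illustration.

```python
from statistics import median

def per_layer_strengths(layer_norms, global_strength, low=0.5, high=1.5):
    """Hypothetical heuristic, not the node's real method.

    layer_norms: mapping of layer name -> magnitude of that layer's LoRA delta.
    Layers with unusually large deltas are damped, unusually small ones are
    boosted, and the correction factor is clamped to [low, high].
    """
    reference = median(layer_norms.values())
    strengths = {}
    for name, norm in layer_norms.items():
        ratio = reference / norm if norm > 0 else 1.0
        ratio = min(high, max(low, ratio))
        strengths[name] = global_strength * ratio
    return strengths

# Made-up magnitudes for three layers, with one global strength value.
example = per_layer_strengths({"a": 1.0, "b": 2.0, "c": 4.0}, 0.8)
```

The point is only that one global value can fan out into different per-layer values based on what is stored in the file.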

The manual sliders are still there as an option if you don't want to use the auto-strength node, but I 100% recommend using it.

March 10th, 2026 (Fixed refresh bug)

A bug that kept a newly added LoRA from appearing after a page refresh has been fixed.

Also, for more of my work and other node packs, check out my GitHub page.

Here: https://github.com/capitan01R

And if you would like to support my work, you can buy me a coffee at this link :)

March 7th, 2026

There was a bug with the Lora stack node when refreshing the browser, but now it's fixed!

Been using Z-Image Turbo and my LoRAs were working, but something always felt off. Dug into it and it turns out the issue is architectural: Z-Image Turbo uses fused QKV attention instead of separate to_q/to_k/to_v like most other models. So when you load a LoRA trained with the standard diffusers format, the default loader just can't find matching keys and quietly skips them. Same deal with the output projection (to_out.0 vs just out).

Basically your attention weights get thrown away and you're left with partial patches, which explains why things feel off but not completely broken.
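A tiny illustration of that silent skip, as a sketch only. The key names below are shortened, made-up stand-ins (real checkpoints use longer prefixes), and `split_by_match` is a hypothetical helper, not a ComfyUI function. It just shows how a loader that matches keys by name ends up applying only part of the LoRA.

```python
# Hypothetical, shortened key names: the model exposes a fused qkv and a plain "out".
model_keys = {
    "layers.0.attention.qkv.weight",
    "layers.0.attention.out.weight",
    "layers.0.feed_forward.w1.weight",
}

# What a diffusers-style LoRA would try to patch (also illustrative names).
lora_targets = [
    "layers.0.attention.to_q.weight",
    "layers.0.attention.to_k.weight",
    "layers.0.attention.to_v.weight",
    "layers.0.attention.to_out.0.weight",
    "layers.0.feed_forward.w1.weight",
]

def split_by_match(targets, available):
    """Separate LoRA targets into those the model has and those it lacks."""
    matched = [key for key in targets if key in available]
    skipped = [key for key in targets if key not in available]
    return matched, skipped

matched, skipped = split_by_match(lora_targets, model_keys)
print("applied:", matched)
print("skipped:", skipped)
```

With these stand-in names, only the feed-forward patch lands and all four attention patches are dropped without an error.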

So I made a node that handles the conversion automatically. It detects if the LoRA has separate Q/K/V, fuses them into the format Z-Image actually expects, and builds the correct key map using ComfyUI's own z_image_to_diffusers utility. Drop-in replacement, just swap the node.
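For anyone curious what "fusing" separate Q/K/V LoRA weights can look like mathematically, here is a minimal sketch. It is not the node's code. It assumes the fused QKV weight is Q, K and V stacked along the output dimension, and that each LoRA delta is `(alpha / rank) * up @ down`. Plain nested lists are used so it runs without dependencies (real code would use tensors), and `fuse_qkv_lora` and `matmul` are hypothetical helper names. The down matrices are stacked, the up matrices are placed block-diagonally, and each alpha/rank scale is baked into its up block, so the fused pair should be applied with a scale of 1.

```python
def matmul(a, b):
    """Multiply two matrices given as nested lists."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def fuse_qkv_lora(parts):
    """parts: [(down, up, alpha)] for q, k, v, in that order.

    down is rank x in_features, up is out_features x rank.
    Returns (fused_down, fused_up) with fused_up @ fused_down equal to the
    scaled q, k, v deltas stacked along the output dimension.
    """
    total_rank = sum(len(down) for down, _, _ in parts)
    fused_down, fused_up = [], []
    col_offset = 0
    for down, up, alpha in parts:
        rank = len(down)
        scale = alpha / rank  # baked into the up block below
        fused_down.extend(list(row) for row in down)
        for row in up:
            padded = [0.0] * total_rank
            padded[col_offset:col_offset + rank] = [scale * v for v in row]
            fused_up.append(padded)
        col_offset += rank
    return fused_down, fused_up

# Toy rank-1 LoRAs with 2 input and 2 output features each.
q = ([[1, 2]], [[1], [0]], 1)
k = ([[0, 1]], [[2], [1]], 2)
v = ([[1, 1]], [[1], [1]], 1)

fused_down, fused_up = fuse_qkv_lora([q, k, v])
fused_delta = matmul(fused_up, fused_down)

# Reference: compute each scaled delta separately, then stack the rows.
separate_delta = []
for down, up, alpha in (q, k, v):
    scale = alpha / len(down)
    separate_delta.extend([scale * x for x in row] for row in matmul(up, down))
```

Here `fused_delta` and `separate_delta` come out identical, which is the property that makes the fusion lossless. The fused rank is the sum of the three original ranks.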

Repo: https://github.com/capitan01R/Comfyui-ZiT-Lora-loader

If your LoRA results on Z-Image Turbo have felt a bit off, this is probably why.

Description

v1.2.0

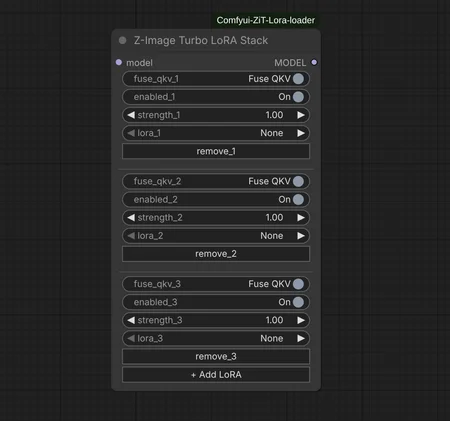

Added multiple LoRA load and remove.