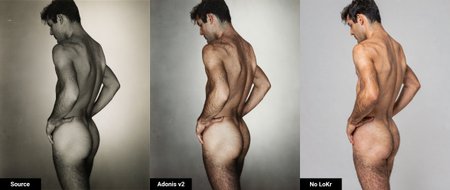

Adonis for Flux 2 Klein 9B

Adonis is an "upscale model" LoKr trained on a high-resolution "target" dataset of men, paired with synthetic low-resolution edited copies as the "control." It refines skin, hair, and anatomy details that the base model often gets wrong.

How it Works

Edit-Only: Improves only what is already visible in the input image. Suitable for any image involving people.

Two-Model Generation: The model is split into two models (adonis_base and adonis_refine) that work best together:

Adonis Base: Sets the image structure and color first (first 4-6 steps).

Adonis Refine: Brings out details and corrects issues from the initial steps (final steps, 9 steps total).
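
The hand-off between the two models can be pictured as a simple step schedule. This is only a sketch, assuming a 9-step run where the base model takes the first 4 to 6 steps; `split_steps` is a hypothetical helper, not part of ComfyUI or the workflow:

```python
def split_steps(total_steps: int = 9, base_steps: int = 5) -> dict:
    """Return half-open (start, end) step ranges for the two passes.

    Hypothetical helper: assumes the base model handles the first
    `base_steps` steps and the refine model handles all remaining steps.
    """
    if not 0 < base_steps < total_steps:
        raise ValueError("base_steps must be between 1 and total_steps - 1")
    return {
        "adonis_base": (0, base_steps),
        "adonis_refine": (base_steps, total_steps),
    }


schedule = split_steps(total_steps=9, base_steps=5)
print(schedule)
```

In a two-pass sampler setup, these ranges would map to each pass's start and end step. The exact field names depend on the sampler nodes in use.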

Recommended Prompt

This prompt enhances most images effectively:

uhdmanscale, fully reconstruct this entire image from cellphone quality to professional high resolution color raw quality. Remove halftone dot pattern. Apply descreen filter. Eliminate periodic grid noise. Eliminate repeating noise patterns and artifacts, remove uniform diagonal line texture patterns. Reconstruct low resolution high ISO noise areas with high resolution low ISO noise textures.

Apply full detail reconstruction to all areas: background, environment, surfaces, objects, clothing, and foreground elements — render everything sharp, textured, and high fidelity.

Subject identity is locked: preserve exact facial geometry and body geometry, eye shape and color, nose and mouth shape, and expression. On skin areas, remove color blotch artifacts, normalize tone uniformity, preserve natural pore and texture detail. On hair and body hair areas, separate smeared color artifacts, restore strand separation and texture. Outside the subject's face, freely reconstruct all texture and sharpness with no restrictions.

Deblur and focus correction pass. Infer and reconstruct underlying detail from soft source: sharpen edge definition, recover eye detail, lip definition, and skin texture from motion blur. Output as professional high resolution color camera RAW image.

Note: For non-color inputs, specify object colors in the background. Replace "color" with "black and white" if you do not want to colorize the image.
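
If you switch between color and black-and-white outputs often, the swap can be scripted. A minimal sketch, assuming only the output-format phrases ("high resolution color ...") should change, so that instructions such as "eye shape and color" stay intact; `to_monochrome_prompt` is a hypothetical helper name:

```python
def to_monochrome_prompt(prompt: str) -> str:
    """Swap "color" for "black and white" in the output-format phrases only.

    Assumption: the phrases to change are the ones that follow
    "high resolution"; other uses of "color" are left alone.
    """
    return prompt.replace("high resolution color", "high resolution black and white")


prompt = (
    "uhdmanscale, fully reconstruct this entire image from cellphone quality "
    "to professional high resolution color raw quality. "
    "Preserve eye shape and color. "
    "Output as professional high resolution color camera RAW image."
)
print(to_monochrome_prompt(prompt))
```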

ComfyUI Setup

Install these custom nodes: Res4Lyf, rgthree, vrgamedevgirl, and kjnodes (links are listed in the Description below).

Model Files & Requirements

Base Models:

FLUX.2-klein-9B.safetensors (HuggingFace: black-forest-labs/FLUX.2-klein-9B)

Flux 2 VAE (HuggingFace: Comfy-Org/flux2-dev)

Clip:

Use a higher-quantization GGUF if VRAM is limited. In testing, FP8 tended to degrade detail in eyes and fingers.

Description

Adonis F2K v2

Adonis v2 was trained on 100 paired low-resolution/high-resolution images of men. A custom Python script degraded the high-resolution camera RAW images in ways that match common low-resolution sources (low-light, noisy, or blurry images; pixelated web images; black-and-white vintage magazines; other vintage images and media; etc.).
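
The actual degradation script is not published here, so the following is only an illustration of the paired-data idea, not the method used for training. It works on a grayscale image stored as a list of rows (values 0-255); the function name, the downsample factor, and the noise level are all assumptions:

```python
import random


def degrade(pixels, factor=2, noise_sigma=8.0, seed=0):
    """Make a low-quality "control" copy of a grayscale "target" image.

    Illustrative only: box-downsample by `factor`, add Gaussian noise,
    then upsample with nearest-neighbour so the sizes match the target.
    """
    h, w = len(pixels), len(pixels[0])
    if h < factor or w < factor:
        raise ValueError("image is smaller than the downsample factor")
    rng = random.Random(seed)  # seeded so the pairs are reproducible

    small = []
    for y in range(0, h - h % factor, factor):
        row = []
        for x in range(0, w - w % factor, factor):
            block = [pixels[y + dy][x + dx]
                     for dy in range(factor) for dx in range(factor)]
            value = sum(block) / len(block) + rng.gauss(0.0, noise_sigma)
            row.append(min(255, max(0, round(value))))
        small.append(row)

    # Nearest-neighbour upsample back to the original size.
    return [
        [small[min(y // factor, len(small) - 1)][min(x // factor, len(small[0]) - 1)]
         for x in range(w)]
        for y in range(h)
    ]


target = [[(x * 32 + y * 4) % 256 for x in range(8)] for y in range(8)]
control = degrade(target)
print(control[0])
```

A real pipeline would work on full RGB images and mix several degradations (blur, pixelation, halftone, grayscale conversion) to cover the source types listed above.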

The model excels at capturing fine hair and skin detail that is normally destroyed during the base model/upscale process, and understands the correct color and anatomy of various solo/group situations. The cleaner the image, the better the result.

v2 of Adonis is a LoKr model instead of a LoRA model.

Using a LoKr with a low scale of 4 and training images at 1024, 1280, and 1536 resolution, the model learned micro skin detail and generalized better, with minimal "grid" artifacting compared with v1.
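
For readers new to LoKr: instead of a LoRA-style product of two thin matrices, the weight update is built from a Kronecker product of smaller factors. The sketch below shows only that general idea with tiny example matrices. It does not reproduce the Adonis training settings, and how the "scale of 4" maps onto factor sizes is not something this example assumes:

```python
def kron(a, b):
    """Kronecker product of two matrices given as lists of rows."""
    return [
        [a_ij * b_kl for a_ij in a_row for b_kl in b_row]
        for a_row in a
        for b_row in b
    ]


# Two small factors (illustrative values only).
a = [[1, 2],
     [3, 4]]
b = [[0, 1],
     [1, 0]]

delta_w = kron(a, b)  # 4x4 update built from two 2x2 factors
for row in delta_w:
    print(row)

# Stored parameters: the two factors versus the full update matrix.
factor_params = len(a) * len(a[0]) + len(b) * len(b[0])
full_params = len(delta_w) * len(delta_w[0])
print(factor_params, "stored values produce", full_params, "weights")
```

The saving grows quickly with layer size, since the factors scale far more slowly than the full matrix.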

For extremely low resolution images with prominent checkerboard artifacts, you may need to add a Gaussian blur or try a 1xDeJPG model to remove as much of that pattern as possible to get a better result.
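
To see why a light Gaussian blur helps with checkerboard patterns, here is a small pure-Python sketch on a grayscale grid. The sigma and radius values are assumptions for illustration, not tuned recommendations:

```python
import math


def gaussian_kernel(sigma, radius):
    """Normalized 1D Gaussian weights for offsets -radius..radius."""
    weights = [math.exp(-(i * i) / (2 * sigma * sigma))
               for i in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]


def blur_1d(values, kernel):
    """Convolve one row or column, clamping indices at the edges."""
    radius = len(kernel) // 2
    n = len(values)
    out = []
    for i in range(n):
        acc = 0.0
        for k, w in enumerate(kernel):
            j = min(n - 1, max(0, i + k - radius))
            acc += w * values[j]
        out.append(acc)
    return out


def gaussian_blur(pixels, sigma=1.0, radius=2):
    """Separable Gaussian blur: blur the rows, then the columns."""
    kernel = gaussian_kernel(sigma, radius)
    rows = [blur_1d(row, kernel) for row in pixels]
    cols = [blur_1d(list(col), kernel) for col in zip(*rows)]
    return [list(row) for row in zip(*cols)]


# Worst-case checkerboard: alternating 0 and 255 pixels.
checker = [[255 if (x + y) % 2 == 0 else 0 for x in range(8)] for y in range(8)]
blurred = gaussian_blur(checker)
flat = [v for row in blurred for v in row]
print("contrast before:", 255, "after:", round(max(flat) - min(flat)))
```

On real images, the same pre-pass can be done with Pillow's `ImageFilter.GaussianBlur` or a blur node in ComfyUI. Keep the radius small so genuine detail is not lost along with the pattern.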

While trained on men, both v2 models should be capable of enhancing women as well. (You may need an additional model for female anatomy, since that is not in the current dataset.)

adonis_f2k_v2_main:

* Great quality, somewhat saturated.

* Used for most images. It comes from a lower training step that causes little if any morphing/shifting (precise, but not always accurate with all colors/textures).

adonis_f2k_v2_alt:

* Great quality, less saturated and better texture.

* Used when texture/detail quality matters more than avoiding shifting (more accurate with color and texture; shifts the image slightly, but identity stays intact).

The settings in the workflow gave the best results in my testing, but you may find better results through trial and error depending on your source image.

The custom nodes used are Res4Lyf, rgthree, vrgamedevgirl, and kjnodes. There is an optional scaling node that can be replaced with the scale to total pixels node.

https://github.com/ClownsharkBatwing/RES4LYF

https://github.com/rgthree/rgthree-comfy

https://github.com/vrgamegirl19/comfyui-vrgamedevgirl

https://github.com/kijai/ComfyUI-KJNodes

(Optional) https://github.com/BigStationW/ComfyUi-Scale-Image-to-Total-Pixels-Advanced
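
For reference, scaling an image to a total pixel budget amounts to the arithmetic below. This is a sketch of the idea, not the code of either node; treating one megapixel as 1024 x 1024 pixels and snapping dimensions to a multiple of 16 are both assumptions:

```python
import math


def scale_to_total_pixels(width, height, megapixels=1.0, multiple=16):
    """Return (new_width, new_height) close to the requested pixel budget.

    Keeps the aspect ratio, then snaps each side to `multiple`.
    Assumes 1 megapixel = 1024 * 1024 pixels.
    """
    target = megapixels * 1024 * 1024
    scale = math.sqrt(target / (width * height))
    new_w = max(multiple, round(width * scale / multiple) * multiple)
    new_h = max(multiple, round(height * scale / multiple) * multiple)
    return new_w, new_h


print(scale_to_total_pixels(512, 512))    # square input
print(scale_to_total_pixels(1920, 1080))  # 16:9 input
```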

The best caption is the following (remove non-applicable commands for your image):

uhdmanscale, fully reconstruct this entire image from cellphone quality to professional high resolution color camera raw quality.

Remove halftone dot pattern. Apply descreen filter. Eliminate periodic grid noise.

Apply full detail reconstruction to all areas: background, environment, surfaces, objects, clothing, and foreground elements — render everything sharp, textured, and high fidelity.

Subject identity is locked: preserve exact facial geometry and body geometry, eye shape and color, nose and mouth shape, and expression.

On skin areas, remove color blotch artifacts, normalize tone uniformity, preserve natural pore and texture detail. On hair and body hair areas, separate smeared color artifacts, restore strand separation and texture. Outside the subject's face, freely reconstruct all texture and sharpness with no restrictions.

Deblur and focus correction pass. Infer and reconstruct underlying facial detail from soft source: sharpen edge definition, recover eye detail, lip definition, and skin texture from motion blur. Treat blur as an optical artifact to remove, not a stylistic choice.

Output as professional high resolution color camera RAW image.