If you are looking for video to video with modern models, VACE Wan 2.1 is what you want.

https://civarchive.com/models/1604221/native-vace-video-to-video-with-ref

This won't be updated anymore, sorry.

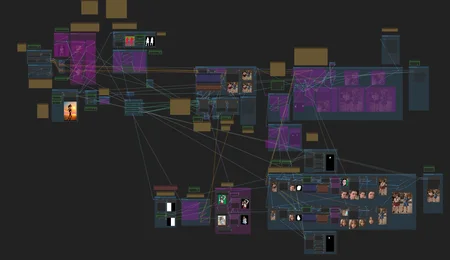

V5.1 (V6 Beta) - This was a lot of work, so please ask questions and leave any feedback in the comments. A LOT has changed, so I really want to make sure everything works.

- Redone nearly from scratch

What's new

1. Model Loading

- Now 1 model by default, with an optional second model if enabled.

- AnimateDiff settings/model can be changed for the upscale, for either model used.

- Motion lora loading

- Removed all saving of controls inside this flow.

- Moved resizing to beside prompting.

2. Masking

- 3 main controls loaded from video or image folder or generated in the flow.

- 5 optional mask loaders

- SAM 2 single mask creation

- Mask subtracting/adding

- Optional upscale mask.

3. Background Controls

- Inpaint Simplified

- Simplified 4 background options

1. Original Frames

2. Video import background

3. Image Load

4. Image Generation

- Image Load and generation both have a crop group to place subjects.

- Background Controls are straightforward for default copies of any of the chosen backgrounds.

4. Subject Controls

- Previews to show what masked controls see when you mask them

- Line art, depth, and openpose controls

- Outfit to outfit / adMotion stabilizers.

- Control cutouts

5. Audio Replacement loading

6. Clearer path for upscale controls.
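For anyone unsure what the mask subtracting/adding listed under masking does, here is a minimal Python sketch of the idea. It assumes a mask is a grid of values from 0 (not masked) to 1 (masked) and that results are clamped to that range. The function names are made up for this example and are not nodes from the flow.

```python
# Hypothetical helpers for illustration only, not nodes from the workflow.
# A mask is a list of rows; each value runs from 0.0 (not masked) to 1.0 (masked).

def clamp01(value):
    """Keep a mask value inside the 0..1 range."""
    return max(0.0, min(1.0, value))

def add_masks(a, b):
    """Anything masked in either input ends up masked."""
    return [[clamp01(x + y) for x, y in zip(row_a, row_b)]
            for row_a, row_b in zip(a, b)]

def subtract_masks(a, b):
    """Cut b out of a: anything masked in b is removed from a."""
    return [[clamp01(x - y) for x, y in zip(row_a, row_b)]
            for row_a, row_b in zip(a, b)]

# Tiny 2x3 example masks (made-up values).
person = [[0.0, 1.0, 1.0],
          [0.0, 1.0, 1.0]]
face = [[0.0, 0.0, 1.0],
        [0.0, 0.0, 0.0]]

body_without_face = subtract_masks(person, face)
person_again = add_masks(body_without_face, face)
```

Subtracting the face mask from the person mask leaves everything but the face, and adding the face back restores the full person mask.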

Controls 5.11 - I don't know what went wrong, but it was saving to the wrong spot. I replaced the video text box with the exact same node and it started working again. I don't know if this was a "me" issue or if the node was updated and bugged out.

Changed openpose to properly bypass instead of disable.

SAM 5.1 - working on an article for this flow now.

Simple SAM 2 masking - both flows in one now.

There are so many damn ways to do this... I am giving you the 2 best I have found.

Features

- separation of people or objects in a video/image

- tracks people or objects throughout videos

- mask faces, hands, clothing, skin, hair, or any object.

- load from project folder or any location.

https://civarchive.com/articles/8035

https://civarchive.com/articles/8360/using-sam-2-masking

Controls 5.1 is the start of 6.0. I still have work to do on the others, so I thought I would throw this up for now. It's very streamlined for video/pose/depth/lineart saving, with optional masking with SAM 1 or remove background.

- Removed face mesh

- removed depth masking

- removed optional controls

- removed anything that was not needed anymore.

- Changed Interface to be more streamlined

- Kept sam1 and remove background masking options if needed

- video source, Depth, pose, lineart video saving

- optional depth and video source image saving

https://civarchive.com/articles/8036/video-to-video-project-setups

5.01

- added back downscale options for all controls (large vram savings when using HD controls)

- loading in all masks for IP Adapter; I will perhaps add 1 more in the next update.

- fixed a few minor bugs
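To give a feel for why downscaling HD controls saves so much memory, here is a small Python sketch of the arithmetic. The resolutions and frame count are example values, not settings from the flow. It only counts the raw image data of the control frames, assuming 3 channels stored as 32-bit floats, and ignores what the models themselves use.

```python
def control_batch_megabytes(width, height, frames, channels=3, bytes_per_value=4):
    """Raw size of a batch of control images, assuming float32 values."""
    total_bytes = width * height * channels * bytes_per_value * frames
    return total_bytes / (1024 ** 2)

# Example values only: 100 frames at full HD versus half the width and height.
hd = control_batch_megabytes(1920, 1080, frames=100)
half = control_batch_megabytes(960, 540, frames=100)

print(f"HD controls:         {hd:.0f} MB")
print(f"Downscaled controls: {half:.0f} MB")
print(f"Ratio:               {hd / half:.0f}x")
```

Halving both width and height cuts the pixel count to a quarter, and that applies to every control you load.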

Important note: All saves will have a 00001 etc. attached at the end. You can remove this or just use the names as they are to load in the video flow.

I feel this should be made clear: V5 comes with many extra workflows. They are all meant to be used in the order of the folders.

comfyui/output/projects is the main folder. You choose the video name inside this folder to save each video.

Following flows

masksandcontrols

used to save the video/controlnets/masks to the proper folder to load in for the videos flow.

These will have 00001 etc. attached to the names. You can rename them or use those default names when loading in the videos flow.
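If you would rather strip the counter than load the default names, something like this Python sketch could do it. It assumes the counter is an underscore plus five digits right before the file extension (for example `depth_00001.mp4`), optionally followed by one more underscore. That pattern is an assumption, so check your own files first. The function names are made up for this example.

```python
import re
from pathlib import Path

# Assumed pattern: "_00001" (or "_00001_") at the end of the name, before the extension.
COUNTER = re.compile(r"_\d{5}_?$")

def strip_counter(filename):
    """Return the filename without the trailing save counter, if it has one."""
    path = Path(filename)
    return COUNTER.sub("", path.stem) + path.suffix

def rename_saves(folder, dry_run=True):
    """Rename every file in folder. With dry_run=True it only prints the plan."""
    for path in sorted(Path(folder).iterdir()):
        if not path.is_file():
            continue
        target = path.with_name(strip_counter(path.name))
        if target == path:
            continue  # no counter found, nothing to do
        if target.exists():
            print(f"skip {path.name}: {target.name} already exists")
            continue
        print(f"{path.name} -> {target.name}")
        if not dry_run:
            path.rename(target)
```

Run it once with `dry_run=True` on your project/videoname folder to see what would change, then again with `dry_run=False` if the plan looks right.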

SAM 2 masking

used to load in the saved video from the masking flow and create SAM 2 masks for it, saving everything to the same project folder

Videos flow

This loads in the video and controls generated above to create a video.

This saves everything to a folder called base and a folder called upscaled.

upscale flow

This loads in any videos from the upscaled folder inside your video folder. These are the saves from the video flow.

This saves to a folder called upscale2 inside the same project/videoname folder

this allows you to load in a mask from the project folder

face fix flow

this loads from the upscale2 folder to process the faces

this saves to the folder called faceenhance

Live portrait flow

this loads from the faceenhance folder the video of your choice

this saves to the main video folder, I believe.

All of these have options to change where they are loading from, so you can skip a step or 2 if you want.
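The folder hand-offs described above can be summed up in a short Python sketch. The folder names come from the descriptions above. The `projects_root` default, the `myvideo` name and the function itself are only for illustration, and the Live Portrait save location is only where I believe it saves.

```python
from pathlib import Path

# Each flow: (name, folder it loads from, folders it saves to).
# Folder names are relative to projects/<videoname>; "" is that folder itself.
FLOWS = [
    ("masksandcontrols", None, [""]),        # loads a video from anywhere
    ("sam 2 masking", "", [""]),
    ("videos", "", ["base", "upscaled"]),
    ("upscale", "upscaled", ["upscale2"]),
    ("face fix", "upscale2", ["faceenhance"]),
    ("live portrait", "faceenhance", [""]),  # save location not confirmed
]

def project_paths(video_name, projects_root="ComfyUI/output/projects"):
    """Map each flow to the default paths it reads from and writes to."""
    project = Path(projects_root) / video_name
    layout = {}
    for name, load_from, save_to in FLOWS:
        layout[name] = {
            "loads": None if load_from is None else project / load_from,
            "saves": [project / folder for folder in save_to],
        }
    return layout

# "myvideo" is a placeholder video name.
layout = project_paths("myvideo")
for name, folders in layout.items():
    print(name, "<-", folders["loads"], "->", [str(p) for p in folders["saves"]])
```

Each flow loads from the folder the previous flow saved to, which is why skipping a step means changing the load location in the next flow.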

V5 has the ability to generate all controls in the flow. This was my attempt to integrate the masking flow with the videos flow. It worked, but it is HIGHLY recommended to run the masksandcontrols.json and generate the controls needed for your video.

It takes less than 5 minutes, and is easy to follow. This flow creates ALL folders and controls you need to run the videos flow and places them inside the project/videoname folder for you. You can load any video in this flow from anywhere on your pc to process.

Then you can load up sam 2 masking and load the video from the project folder the masking flow saved. After this it only loads from this folder.

If you skip this step things get more confusing when reloading masks and controls.

All examples use no upscale or face fixing, except the girl with curly hair running, which had a face fix applied.

Explanation of all node groups

https://civarchive.com/articles/7063

Enjoy everyone. :)

V5

- Reworked loading to work better with loaders

- Interface cleaned up

- moved prompting to beside base render

- changed the loading of models for easy turn off and on of settings

- removed pix2pix

The following is set up to run with the videos from the main video flow using project folder.

- including SAM 2 masking flow

- including masking/controlnet flow

- including upscale flow

- including face fix flow

- including Live Portrait flow

- added article with info on video gen workflow

- 2 example projects included

- looped spin

- running

Masking flow can now save images for frames and depth to help with compression artifacting

I'm tired.

Beta 3 - I am separating v2 and v3 beta because there have been many changes to Comfy, and bugs have been introduced that I don't know if I need to fix or if they will be fixed with Comfy updates. V2 may work better?

- a lot of minor fixes, hopefully did not break other things.

- added SAM masking to the main mask save area

- added depth mask saving to main depth gen area.

- added saving of custom sam masks generated / added mask loader to load in your custom saved masks

- Moved all controlnet Model loading to the start, ensure you have loaded your models properly.

- tested and fixed the dual mask prompting, i think it works properly.

- auto adding commas to all schedules to ensure they work properly

- tagger tags integrated into all prompts if enabled; for schedules, the tags are added to the pre text.

- edited the placement background option... not sure if it was broken before...

- removed meshgraphormer hand refiner. IMO this is meant for text to video, not video to video. Hand masking can be done with sam masks and you can use depth/lineart with those custom masks.

- added the nodes needed to combine any of your masks together.

- added skip every override to all control loaders to allow you to load in controls you saved with skip every enabled.

- included a project folder with an animation from the examples, called "Stop"
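The skip every override from the list above is easiest to see with frame counts. This is a plain Python sketch, not the loader's actual code: `select_every` is a made-up helper that keeps every nth frame, and the frame numbers are example values.

```python
def select_every(frames, nth):
    """Keep every nth frame, starting with the first."""
    return frames[::nth]

# Example values: a 100-frame source with skip every set to 2.
source = list(range(100))
video = select_every(source, 2)            # what the video flow renders
saved_controls = select_every(source, 2)   # controls saved with skip every = 2

# Loading the saved controls with the same setting skips frames a second time.
double_skipped = select_every(saved_controls, 2)

# Overriding skip every to 1 on the control loader keeps them in step.
overridden = select_every(saved_controls, 1)

print(len(video), len(double_skipped), len(overridden))
```

Without the override, the controls would have half as many frames as the video they are meant to guide.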

I am sure there will be errors for the masking in some cases due to the downscale option, so many previews depend on it.

If you have an error with any combine masks, disable the downscale masks option and it should work. I am not even sure downscale does anything; I think it auto downscales when rendering.

Included is a project folder with a video to load as default.

The below issue has been fixed with updated comfy UI

https://github.com/comfyanonymous/ComfyUI/pull/4535

**********IMPORTANT**********

A bug in the video loaders has been reported, and I am hoping for a fix.

- when loading a video via string combines, as this flow does, the loaders need to load things 2 times to cache things properly... I don't know why; it should take only 1

possible solutions

1. I could change all loaders to load videos directly, but that would require you to set the video for every video control you load and would break any simple text boxes that load videos. It's a workaround that I don't want to do TBH, as it makes more work for both me and you.

2. Keep it as is, with the knowledge that you need to set up all controls and load them twice before starting your render. This can be done by just disabling the first and second pass renders and running the flow a few times until it just starts and stops immediately, waiting for changes. It works on the first render, but if you enable upscale after doing base, it will restart base if you have not loaded twice. This is currently what I am doing while waiting for a response to my bug report.

In summary, disable both the first and second render and run the flow to load all controls 2 or 3 times, until the flow does not reprocess anything when you press q. This prevents restarts when enabling things further down in the flow, like the upscale.

Beta 2 -

fixed save location for pose and line art

fixed batching and re-batching for SAM custom masks

added a default project folder with a default video (Sheesh Dance, example in previews). The original is 400+ frames, so limit the frames if you have a lower VRAM card and want to use the default.

Put project folder inside comfy ui output folder to load default workflow

V5 beta

- reworked interface

- added simple masking creation and save

- added dual mask loading

- added depth, pose, lineart generation and save

- Reworked loading controls

- Reworked prompting

- Simple Prompting, Scheduled Prompting, Regional Prompting

- added more custom masking options

- using constants to prevent line spam

- updated all video combines

Fixes

- no longer using CLIP Vision the wrong way

Removed

- Depth lineart

- Face Masking

- Face Mesh

- Face Controls

- Background scroll/pan

Looking to get feedback if this is better, worse or whatever.

Leave a comment if you think it's worse for some reason, or a thumbs up if you like it.

Not sure if it's better than v4, but it's a lot cleaner.

Updated workflow to fix the spin1 example, it now has the needed files, sorry.

Starting to go through this to optimize and fix anything. Comment on anything you would like added or changed.

masking 4.1

- added Depth Anything v2

- added dense pose saving for testing

- added proper saving locations, now all control net files are saved to the projects folder inside the chosen video folder for easier sorting

note - all files still need to be renamed to remove the 00001 extensions.

- added proper switch to change the save directory name

V4Simplified - this version is cut down to only offer less confusing options with a clear path.

- I don't know of many more things that would make this easier. Some advanced things were removed, and the UI is now a walkthrough.

- Removed RGB masks \ multi prompting (use advanced version for that)

- Added Step groups to help guide new users.

- Added new notes

All 4 parts included in 1

Masking

Videos

Upscale

Extras

2 example projects

1 spin as usual

1 anime dance that was requested.

a few old projects to show examples. They are probably out of date but should show you the settings I used.

If you tried this workflow before and thought it was too complicated, this version is for you. This took a while to put together.

some possible nodes needed

https://github.com/ltdrdata/ComfyUI-Manager

https://github.com/Kosinkadink/ComfyUI-VideoHelperSuite

https://github.com/crystian/ComfyUI-Crystools

https://github.com/mav-rik/facerestore_cf

https://github.com/ltdrdata/ComfyUI-Impact-Pack

https://github.com/WASasquatch/was-node-suite-comfyui

https://github.com/Nourepide/ComfyUI-Allor

https://github.com/Suzie1/ComfyUI_Comfyroll_CustomNodes

https://github.com/Kosinkadink/ComfyUI-Advanced-ControlNet

https://github.com/cubiq/ComfyUI_essentials

https://github.com/Fannovel16/ComfyUI-Frame-Interpolation

https://github.com/SLAPaper/ComfyUI-Image-Selector

https://github.com/FizzleDorf/ComfyUI_FizzNodes

https://github.com/LonicaMewinsky/ComfyUI-MakeFrame

Controlnet Models used

I think these 2 are the same, or they produce very similar results.

(unknown name) adMotion - https://huggingface.co/crishhh/animatediff_controlnet/resolve/main/controlnet_checkpoint.ckpt?download=true

Animatediff Controlnet - https://civarchive.com/models/232617/animatediff-controlnet-models

Media Pipe Face Mesh - https://huggingface.co/CrucibleAI/ControlNetMediaPipeFace/tree/main

Outfit to Outfit - https://civarchive.com/models/191956/outfit-to-outfit-controlnet-outfit2outfit

pix2pix - https://civarchive.com/models/38784/controlnet-11-models
