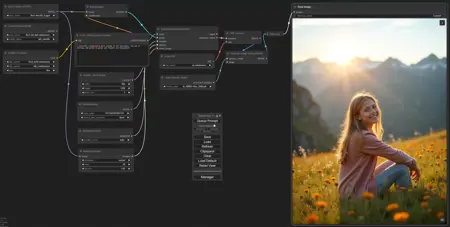

--- v2.0 has 3 LoRA slots, Save Image with Metadata, optional upscale, and is just neater than the quick share ---

Quick share for those who asked in the comments. The workflow is built around this model (I used Q8):

https://civarchive.com/models/647237?modelVersionId=724149

It can also be used with F16, which gives better quality than Q8 but is harder on your PC:

https://civarchive.com/models/662958/flux1-dev-gguf-f16

Direct downloads for the GGUF models are here:

https://huggingface.co/city96/FLUX.1-dev-gguf/tree/main

😂 The provided prompt is a parody of what a Google search returns for the meaning of Lada 😂

If Q8 is on the edge of your VRAM, you can also use Q6_K which is smaller and almost the same quality!

Comments (16)

I'm new to Comfy, what is GGUF?

I have no idea, I just found it 30 minutes ago, but it works: put the file in your unet folder and go. You do need the custom node though; I should add that.

It's a file format used for models, but the main thing is that the GGUF models are quantized: the small quants are about as small as the NF4 ones but much higher quality. Q8_0 produces nearly identical results to the full FP16 model while fitting into 16GB VRAM, and Q4_0 is smaller and better looking than fp8 while fitting into 8GB VRAM.

Better compression basically.
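
To sanity-check the size claims above, here is a rough back-of-the-envelope sketch. It assumes FLUX.1-dev has about 12 billion parameters and uses the bits-per-weight of each GGML block layout; `PARAMS`, `BITS_PER_WEIGHT` and `estimate_gb` are illustrative names, not part of any tool. Real files differ a little because some tensors are stored at higher precision, and actual VRAM use is higher once the text encoders, VAE and activations are loaded.

```python
# Rough GGUF file-size estimate: parameters * bits-per-weight / 8.
# Assumption: FLUX.1-dev has roughly 12 billion parameters.
PARAMS = 12e9

# Bits per weight implied by each block layout.
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": 8.5,     # 32 int8 weights + one fp16 scale = 34 bytes per 32 weights
    "Q6_K": 6.5625,  # 210 bytes per 256 weights
    "Q4_0": 4.5,     # 16 bytes of 4-bit weights + one fp16 scale per 32 weights
}


def estimate_gb(quant, params=PARAMS):
    """Return the approximate model size in GB (10^9 bytes) for a quant type."""
    return params * BITS_PER_WEIGHT[quant] / 8 / 1e9


if __name__ == "__main__":
    for name in BITS_PER_WEIGHT:
        print(f"{name}: ~{estimate_gb(name):.2f} GB")
```

Under these assumptions Q8_0 lands just under 13 GB and Q4_0 just under 7 GB, which is consistent with the 16GB and 8GB VRAM figures mentioned above, and Q6_K sits in between.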

How many iterations/sec do you get on 8GB VRAM? I get 40s/it on a 4060Ti with 32GB RAM.

I don't know, I do 5.5s/it on 12GB

@JayNL 40s/it in ComfyUI

@Eepol that's a massive difference with a 4070 12GB

@JayNL In Forge I get 2.9 it/s

@Eepol that sounds more logical with 16GB, guess your settings in Comfy were not ok

I'm also using 8GB VRAM, so we shouldn't ask how many iterations per second; we should ask how many seconds per iteration.
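
Since the numbers in this thread mix s/it and it/s, a small sketch for putting them on the same scale may help. The function names and the 20-step default are assumptions for illustration only; nothing here comes from ComfyUI or Forge.

```python
def to_seconds_per_it(value, unit):
    """Normalize a speed reading to seconds per iteration."""
    if value <= 0:
        raise ValueError("speed must be positive")
    if unit == "s/it":
        return float(value)
    if unit == "it/s":
        return 1.0 / value
    raise ValueError(f"unknown unit: {unit}")


def total_seconds(value, unit, steps=20):
    """Estimate sampling time for one image (steps=20 is an assumed default)."""
    return to_seconds_per_it(value, unit) * steps


if __name__ == "__main__":
    # Readings quoted in the comments above.
    for value, unit in [(40, "s/it"), (5.5, "s/it"), (2.9, "it/s")]:
        print(f"{value} {unit}: {total_seconds(value, unit):.1f} s for 20 steps")
```

Converting everything to seconds per iteration makes it obvious how far apart a 40s/it reading and a 2.9 it/s reading really are.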

Is Forge faster than ComfyUI on Flux?

I'm on a Mac. Theoretically, it should be faster, but I'm not seeing any speed difference even with the Q4. What am I doing wrong?

I experienced that as well. I'm on a 16GB 4060Ti, and Q4_0 gives roughly the same performance as fp8 dev.

@curzon739 Even F16 is kinda the same speed as Q8. The quants mainly differ in how much VRAM they use and in quality, not in speed.