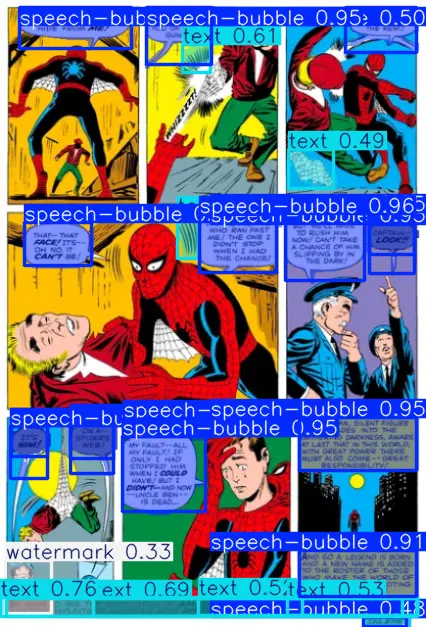

This Adetailer model will segment speech bubbles, text and watermarks commonly found in training data. Trained this so I could eventually automatically clean images in a dataset. Only tested on Comfy, but should work on other webUIs too. This is a WIP, and I have many things in mind on which could be improved:

Known issues:

make sure you don't set minimum confidence too low, or else undesired objects will be segmented

can misidentify watermarks for text, speech bubbles for logos etc. but this should not matter since they are segmented anyway

Some text that is transparent/partially hidden won't be identified

Trained primarily on NSFW images, may not work too well with comics, images with large/strange fonts etc.

Description

FAQ

Comments (10)

I have my own script to identify and run lama, but I was lacking a general purpose model, so this is great, I'll have to see how to change the bboxes for segmentations for the masks though, I'll give it a try next time, thanks!

Np! I tried coding a script that used lama, got lazy and just tried comfy. Would it be possible to share the script? I had an idea for improving quality after lama, but its not possible in comfy

As someone who only has about 4 lines of code committed to memory... I would also like to request the script along with possibly some instructions, lol

@septagon hey! did you figure this out? I'm about to try, that's why I'm asking.

Ok, I figured it out, works fine. If you are still around talk to me on discord (IndolentCat) to help you. I'd want to clean and add args before uploading publicly.

@IndolentCat sorry completely forgot to respond, I created a new workflow that works (semi) well but is still pretty lackluster. The new one utilizes both lama and SD inpainting. After that I kinda just got lazy and never went very far with a script. I'd be happy to ask some questions on discord, expect a DM from user "mistake"

Finally good stuff, how do you train models like that? That probably deserves it's own artcle.

I just recently come up with Comfy workflow that creates masks with Florence2Run and then batch inpaint images with image editor or even just python script with cv2.INPAINT_TELEA

It's hit or miss too, but manually editing half dataset is better than editing whole dataset.

https://civitai.com/articles/4080/training-a-custom-adetailer-model-with-yolov8-detection-model goes over the basics. Thanks for the tip. Yeah inpainting can work well, other times it just creates a bigger mess. Still looking for a better solution

@septagon, thanks, following article you linked i was able to create yolo8 model, it may not be precise as general purpose model but cleaning up an artist-specific mark/logo works beautifully. And considering time to create tiny dataset with yolov8 labels and to train yolov8 model it is much faster method than doing it manually on even on just 200+ images. I was surprized how fast it trained, 500 epochos in less than 10 mins.

Yes, artist mark scrubbing is bad and I should feel bad. But I hate when some pattern is annoyingly persistent on lora images.

I should also try masked loss, sounds like it's almost the same thing but i read that it may cause distortions.

@Gebsfrom404 yeah masked loss i didnt try yet, might give it a go some time. The dataset i trained this on was tiny in comparison, but I wanted the benefits of training on a variety of styles. In terms of training, id apply some augmentations, can drastically increase dataset size while increasing performance. It may not be necessary, but I trained this on yolo8x-seg, which is the best performing but most computationally intensive yolov8 model. Takes only about 2 hours though