UPDATE: v2-pynoise released, read the Version changes/notes

LORA based on the Noise Offset post for better contrast and darker images.

The right weighting often depends on the sampler, so I kept it in the low-to-middle range (maybe I will put up a stronger one).

Custom weighting is needed sometimes.

Works better if you use good keywords like: dark studio, rim lighting, two tone lighting, dimly lit, low key, etc.

I didn't like the color saturation and image compositions from theovercomer8sContrastFix_sd15, so I made this one.

UPDATE: v2 is slightly stronger in the whole composition

Update 2: added safetensors file

Quick Comparison

Description

bigger and a bit better

FAQ

Comments (42)

This LORA will not show up in the additional networks list of A1111.

Am I doing anything wrong?

Does it show up under a completely different name?

Just a guess, but maybe you have "Show only safetensors" turned on and this is a PickleTensor.

@osakaharker That was a good idea. I took a look and it's not checked, so it should be showing other formats.

@Polygon Does that help you? https://civitai.com/models/13941?commentId=40193&modal=commentThread

@epinikion Thank you, I did see that, and it seemed to work when used in the prompt. I'm trying to figure out how to make it show up normally in the dropdown with the other LoRAs, though. Any ideas?

@Polygon Nope, I don't use the additional networks extension. I can have a look tomorrow, maybe. Do you see it in the extra networks after a refresh?

@Polygon I've taken a look into it and I can see the LoRA in the dropdown. The only thing I can think of is that "Only show models that have/don't have user-added metadata" under Settings is not set to disabled; I haven't set any metadata, so it needs to be. Otherwise it should show up. It could also be that you put the file in the wrong path. Since Automatic1111 has LoRA built in natively, I set the "extra path to scan for Lora files" setting to "C:\A1111 Web UI\stable-diffusion-webui\models\Lora"; point it to wherever your LoRA files are.

@epinikion I didn't see it after a refresh. I have that option disabled. I'll try again today with the new one, thank you!

Do I need to merge it with the model?

No, it's a LoRA. You need to either use the additional networks extension or update Automatic1111 to the latest version, since it now supports LoRA natively. It works in a similar way to embeddings and hypernetworks, in the sense that it's applied to the model on the fly without modifying the file.

See the "Extra network" section of this page

https://github.com/AUTOMATIC1111/stable-diffusion-webui/wiki/Features

Yes, download it, put it in the "A1111 Web UI\stable-diffusion-webui\models\Lora" folder, and use <lora:epiNoiseoffset_v2:1> in the prompt.

You can adjust the weighting with the number after the second colon, e.g. <lora:epiNoiseoffset_v2:1.5> for a stronger effect, and so on.

@epinikion Thank you very much
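To make the tag format concrete, here is a minimal Python sketch that builds the prompt tag from a downloaded file name and a weight. The helper name `lora_tag` is made up for illustration and is not part of Automatic1111; it only assumes the `<lora:name:weight>` pattern described above, with the name taken from the file name without its extension.

```python
from pathlib import Path


def lora_tag(filename: str, weight: float = 1.0) -> str:
    """Build a <lora:name:weight> prompt tag from a LoRA file name.

    Hypothetical helper for illustration only.
    """
    # The network name is the file name without the extension
    # (.safetensors, .pt, ...).
    name = Path(filename).stem
    # ":g" prints 1.0 as "1" and 1.5 as "1.5".
    return f"<lora:{name}:{weight:g}>"


print(lora_tag("epiNoiseoffset_v2.safetensors"))       # <lora:epiNoiseoffset_v2:1>
print(lora_tag("epiNoiseoffset_v2.safetensors", 1.5))  # <lora:epiNoiseoffset_v2:1.5>
```

The resulting string is simply pasted into the prompt next to the other keywords.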

@sheevlord Thank you

Why is it written like this? <lora:epi_noiseoffset2:1>

There's no such tag in the Tags tab.

When you use a LoRA, do you need to do it like this? Thanks

That's the name I gave my LoRA. When you download it from Civitai, the file is renamed to the title and version, e.g. epiNoiseoffset_v2, so you have to use <lora:epiNoiseoffset_v2:1>. The easiest way to choose a LoRA is from the "extra networks" tab: https://github.com/AUTOMATIC1111/stable-diffusion-webui/wiki/Features

Is it supposed to work with anime models?

I don't have any of them, so why not test it out? It should.

please add safetensor

How many dims is this? I've been trying to merge it with other LoRAs, but I think the difference in dim is causing an issue. If you can tell me how many dims it has, I might be able to work around it.

v2 was about 96 dimensions, if I remember correctly.

Doesn't work. RuntimeError: mat1 and mat2 shapes cannot be multiplied (154x1024 and 768x96)
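A note on reading that error: a matrix product only works when the second dimension of the left matrix equals the first dimension of the right one. Here the input has 1024 features, but the LoRA weight expects 768 and projects down to 96, which matches the dimension mentioned above. As an assumption (the comment does not say which checkpoint was loaded), this pattern would fit a LoRA trained for an SD 1.x model, whose text encoder outputs 768 features, being applied to an SD 2.x model, whose text encoder outputs 1024. A minimal sketch of the shape check, using a made-up helper `can_matmul`:

```python
def can_matmul(a_shape: tuple, b_shape: tuple) -> bool:
    """Return True if an (n x k) matrix can be multiplied by a (k x m) one."""
    return a_shape[1] == b_shape[0]


lora_down = (768, 96)           # shape taken from the error message
from_error = (154, 1024)        # input shape taken from the error message
expected_by_lora = (154, 768)   # assumed input shape the LoRA would accept

print(can_matmul(from_error, lora_down))        # False
print(can_matmul(expected_by_lora, lora_down))  # True
```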

I installed it and it shows up in the Lora tab. I use a checkpoint based on 1.5 and put <lora:epi_noiseoffset2:1> in my prompt, yet I get this error message when I run it:

"Couldn't find Lora with name epi_noiseoffset2"

Please read here: https://civitai.com/models/13941?commentId=41138&modal=commentThread

Okay, thank you! I'll give it a shot. It's just that I put the image into PNG Info, sent it to txt2img, and expected it to run as is.

@mdv Yeah, sadly that doesn't work :(

@epinikion <lora:epi_noiseoffset_v2:1>

The network name has to match the file name. OP gave the wrong example of the tag.

@Tim_D <lora:epiNoiseoffset_v2:1> That's the correct spelling after you download it from Civitai. It is a well-known "problem" with LoRA names and sample prompts.

Update: I edited the prompts in the samples to the namings from CivitAi
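Since the tag name has to match the file name, a quick check like the following can catch the "Couldn't find Lora with name ..." case before running a copied prompt. This is only a sketch: `missing_loras` and the regular expression are made up for illustration, and the check compares names exactly against the file names without their extension.

```python
import re
from pathlib import Path

# Matches <lora:name> and <lora:name:weight>; hypothetical pattern for illustration.
LORA_TAG = re.compile(r"<lora:([^:>]+)(?::([^>]+))?>")


def missing_loras(prompt: str, filenames: list) -> list:
    """Return the LoRA names used in the prompt that have no matching file."""
    available = {Path(f).stem for f in filenames}
    return [name for name, _weight in LORA_TAG.findall(prompt)
            if name not in available]


files = ["epiNoiseoffset_v2.safetensors"]  # contents of the Lora folder
print(missing_loras("dark studio, <lora:epi_noiseoffset2:1>", files))   # ['epi_noiseoffset2']
print(missing_loras("dark studio, <lora:epiNoiseoffset_v2:1>", files))  # []
```

If a name is reported as missing, either rename the tag in the prompt or rename the file so the two agree.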

@epinikion In <lora:epiNoiseoffset_v2:1>, is it necessary to mention lora, or can we directly use <epiNoiseoffset_v2:1>? I am using the .pt files like embeddings with my model, via textual inversion.

@SparklingApps You have to use <lora: so Automatic1111 knows that it has to use a LoRA here (and looks in the right folder). It doesn't matter if you use a .bin, .pt or .safetensors file.

@epinikion Actually, I am not using Automatic1111. I have integrated it with an app named ImagAIne, so there is no need to use lora, right?

@SparklingApps I don't know what that is.

@epinikion Thank you. Feel free to try it out in this app: https://www.sparklingapps.com/imagaine and try using the LoRA by tagging <epiNoiseoffset_v2> in the prompt to see if it gives results.

Just from the samples shown, V3 does look better. It is darker, but more importantly, the pictures made with it look more 'real', and the overall result is more cinematic and dramatic. V3 is the way!

Had an error in my settings, so I have to redo it and see if it works out :(

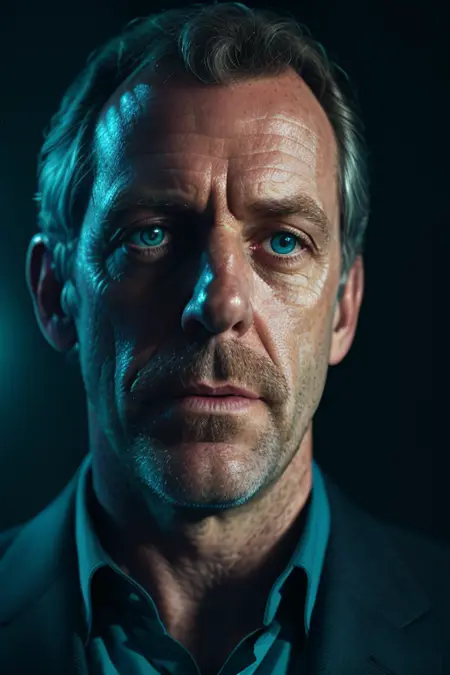

Need some assistance. I'm trying to generate the Hugh Laurie image and I get a similar pose, but without the highly detailed face. I'm using the same A1111 settings as the image. Model: epiHyperphotogodess_v1CLIPFix.safetensors

Lora: epiNoiseoffset_v2.safetensors

VAE: vae-ft-mse-840000-ema-pruned

Prompt, seed and everything else are the same.

Did you use ESRGAN or GFPGAN to increase details?

Do you have face restoration turned on? It sounds like that; I don't use it.

Any tips for darker scenes but with a soft bounce light on the model's face?

Details

Files

epi_noiseoffset2.pt

Mirrors

epi_noiseoffset2.safetensors

Mirrors

epiNoiseoffset_v2.safetensors
