Video Media Toolkit: Streamline Downloads, Frame Extraction, Audio Separation & AI Upscaling for Stable Diffusion Workflows | Utility Tool v6.0

Overview

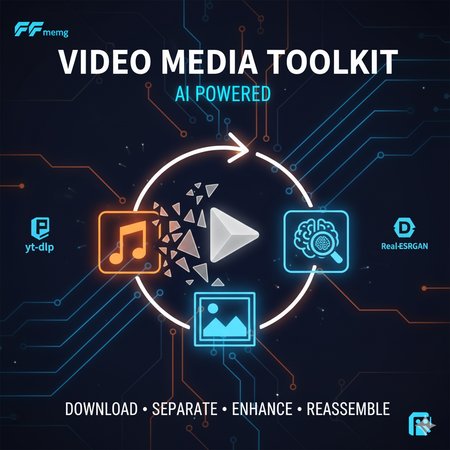

Elevate your AI art pipeline with Video Media Toolkit v6, a free, open-source desktop utility designed for Stable Diffusion creators, trainers, and video-to-image enthusiasts. This all-in-one Windows app handles media ingestion, breakdown, enhancement, and reassembly—perfect for sourcing high-quality frames from YouTube/Reddit videos for LoRA training, isolating vocals/instruments for audio-reactive generations, or upscaling low-res assets to feed into ComfyUI or Automatic1111 workflows.

Whether you're prepping datasets for Flux/Stable Diffusion fine-tuning or crafting dynamic video inputs for AnimateDiff extensions, this tool saves hours by automating tedious tasks with yt-dlp, FFmpeg, Demucs, and Real-ESRGAN under the hood. GPU acceleration is supported for faster processing on NVIDIA setups.

Key Benefits:

Batch Download & Queue: Pull videos/audio from URLs or local files, output as MP4/MP3 or frame sequences (JPG/PNG) ready for dataset prep.

AI-Powered Breakdown: Extract clean audio stems (vocals, drums, etc.) or frames for training—ideal for NSFW/SFW content curation.

Enhance & Rebuild: Denoise, sharpen, upscale (2x-4x), and reassemble with stabilization for polished video outputs.

Workflow Integration: Exports compatible with A1111, ComfyUI, Kohya_ss, or Hugging Face datasets. No more manual FFmpeg scripting!

Tested on Windows 10/11; Python 3.8+ required. ~500MB install size (includes torch with CUDA support and CPU fallback).

Features

Download Tab: Source & Extract Media

Input: URLs (YouTube, Reddit media, direct links) or local files.

Outputs: MP4 (enhanced video), MP3 (audio), or frame folders (e.g., frame_0001.png for SD training).

Enhancements: Resolution (360p-8K), CRF quality, FPS control, sharpen/color correct/deinterlace/denoise.

Audio Options: Noise reduction, volume norm—great for clean stems.

Queue System: Add multiple jobs, sequential processing, auto-delete sources, custom yt-dlp/FFmpeg args.

Pro Tip: Extract 1000+ frames from a 5-min video in seconds; auto-handles Reddit wrappers.
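For readers who want to see roughly what frame extraction looks like outside the GUI, here is a minimal sketch that builds an FFmpeg command producing files such as frame_0001.png. The function name build_frame_extract_cmd and its parameters are hypothetical; the toolkit's real internals are not documented here, so treat this as an illustration only.

```python
from pathlib import Path


def build_frame_extract_cmd(video, out_dir, fps=None, ext="png"):
    """Return an ffmpeg argument list that dumps frames as numbered images.

    Hypothetical helper for illustration; it only builds the command.
    """
    cmd = ["ffmpeg", "-hide_banner", "-i", str(video)]
    if fps is not None:
        # The fps filter resamples the video to the requested frame rate.
        cmd += ["-vf", f"fps={fps}"]
    if ext in ("jpg", "jpeg"):
        # For JPEG output, a low -q:v value keeps quality high.
        cmd += ["-q:v", "2"]
    # ffmpeg expands %04d into 0001, 0002, ...
    cmd.append(str(Path(out_dir) / f"frame_%04d.{ext}"))
    return cmd


print(build_frame_extract_cmd("clip.mp4", "frames", fps=2))
```

To actually run it, create the output folder first and pass the list to subprocess.run(cmd, check=True). Leaving fps unset keeps every source frame.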

Reassemble Tab: Rebuild Videos from Frames

Input: Frame folder (e.g., from Download or external edits).

Options: Set FPS, merge audio, apply minterpolate (motion smoothing), tmix (frame blending), deshake, deflicker.

Output: MP4 with custom FFmpeg filters—export stabilized clips for AnimateDiff or video LoRAs.

Use Case: Upscale frames → Reassemble into 4K training videos.
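The Reassemble options map onto standard FFmpeg filters (minterpolate, tmix, deshake, deflicker). The sketch below shows one plausible way to chain them when rebuilding a video from frames. The helper name build_reassemble_cmd, the libx264/CRF 18 encoder settings, and the file names are assumptions made for the example, not the toolkit's confirmed defaults.

```python
def build_reassemble_cmd(frame_pattern, output, fps=24, audio=None, filters=()):
    """Return an ffmpeg argument list that turns numbered frames into an MP4.

    Hypothetical helper for illustration; it only builds the command.
    """
    # -framerate sets how fast the image sequence is read.
    cmd = ["ffmpeg", "-hide_banner", "-framerate", str(fps), "-i", frame_pattern]
    if audio:
        cmd += ["-i", audio]
    if filters:
        # Filters run left to right, joined with commas.
        cmd += ["-vf", ",".join(filters)]
    # yuv420p keeps the file playable in most players.
    cmd += ["-c:v", "libx264", "-crf", "18", "-pix_fmt", "yuv420p"]
    if audio:
        # Stop at the shorter of the video and audio streams.
        cmd += ["-c:a", "aac", "-shortest"]
    cmd.append(output)
    return cmd


print(build_reassemble_cmd(
    "frames/frame_%04d.png",
    "rebuilt.mp4",
    fps=30,
    audio="vocals.mp3",
    filters=["deflicker", "minterpolate=fps=60"],
))
```

Filter order matters: running deflicker before minterpolate means interpolation works on already-evened frames. Swap in tmix=frames=3 or deshake the same way.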

Audio Tab: Demucs-Powered Stem Separation

Input: MP3/WAV/FLAC from downloads.

Models: htdemucs, mdx_extra, etc. (GPU/CPU modes).

Outputs: Isolated tracks (vocals, bass, drums) to subfolders—feed into audio-conditioned SD prompts.

Modes: Full 6-stem or two-stem (vocals + instrumental) for quick remixing.
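As a rough picture of what the Audio tab drives, this sketch builds a Demucs command line with a GPU-to-CPU fallback. The helper names pick_device and build_demucs_cmd are hypothetical, and how the toolkit actually invokes Demucs is an assumption. The flags used (-n, -d, -o, --two-stems) are part of the Demucs command-line interface.

```python
def pick_device():
    """Return "cuda" when torch reports a usable GPU, otherwise "cpu"."""
    try:
        import torch
    except ImportError:
        return "cpu"
    return "cuda" if torch.cuda.is_available() else "cpu"


def build_demucs_cmd(audio_file, out_dir, model="htdemucs", two_stems=None, device=None):
    """Return a demucs argument list (hypothetical helper; nothing is executed)."""
    cmd = ["demucs", "-n", model, "-d", device or pick_device(), "-o", str(out_dir)]
    if two_stems:
        # two_stems="vocals" yields the vocals plus one track with everything else.
        cmd += ["--two-stems", two_stems]
    cmd.append(str(audio_file))
    return cmd


print(build_demucs_cmd("song.mp3", "stems", two_stems="vocals", device="cpu"))
```

For the six-stem mode, Demucs provides an experimental model named htdemucs_6s that adds guitar and piano; pass it as model="htdemucs_6s" and leave two_stems unset.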

Upscale Tab: Real-ESRGAN Frame Enhancement

Input: Image folder (e.g., extracted frames).

Scale: 2x/3x/4x for SD-ready high-res assets.

Output: Batch-upscaled folder—boost low-res videos to 4K for better model training.

GPU Boost: Torch-based; falls back to CPU.
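Picking a scale factor is simple arithmetic, and a quick check avoids upscaling further than needed. The sketch below computes the output size for a given factor and the smallest factor that reaches 4K UHD (3840x2160). Both function names are hypothetical and the 4K target is an assumption for the example.

```python
def upscaled_size(width, height, scale):
    """Return the (width, height) after upscaling by 2, 3, or 4."""
    if scale not in (2, 3, 4):
        raise ValueError("scale must be 2, 3, or 4")
    return width * scale, height * scale


def min_scale_for(width, height, target_w=3840, target_h=2160):
    """Return the smallest supported scale reaching the target, or None."""
    for scale in (2, 3, 4):
        out_w, out_h = upscaled_size(width, height, scale)
        if out_w >= target_w and out_h >= target_h:
            return scale
    return None


print(upscaled_size(960, 540, 4))   # 960x540 source at 4x
print(min_scale_for(1920, 1080))    # 1080p source
print(min_scale_for(640, 360))      # 360p source cannot reach 4K at 4x
```

A 960x540 source reaches exactly 3840x2160 at 4x, while a 1080p source only needs 2x. Anything smaller than 960x540 will not reach 4K in a single pass.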

Additional Utilities:

Persistent output root folder selection.

Real-time logs + file export (logs/ dir).

Dependency tester (FFmpeg, yt-dlp, Demucs).

High-contrast dark UI for long sessions.

Installation & Setup

Download: Grab the ZIP from the GitHub repo (or from the file attached to this page).

Run Installer: Double-click video_media_installer.bat—auto-installs PySide6, torch (CUDA if detected), Demucs, Real-ESRGAN, etc. Handles pip upgrades.

Manual Fixes: If the installer reports [WARNING] for FFmpeg or yt-dlp, download them from ffmpeg.org and the yt-dlp GitHub page, then add them to PATH or place them at the hardcoded paths.

Model Download: Place RealESRGAN_x4plus.pth in /models/ for upscaling (link in README).

Launch: Double-click launch_video_toolkit_v6.bat. Sets output folder on first run.

Test: Use "Test Dependencies" button—aim for all [OK].
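If you want to check dependencies before launching, the same kind of test is easy to approximate in a few lines. This is a sketch of the idea only: the function names are hypothetical and the [OK]/[WARNING] wording simply mirrors the labels mentioned above. The app's own checker may test more than this.

```python
import importlib.util
import shutil


def check_dependencies(executables=("ffmpeg", "yt-dlp"), modules=("demucs",)):
    """Map each dependency name to True if it can be found."""
    report = {}
    for exe in executables:
        # shutil.which searches PATH the same way the shell would.
        report[exe] = shutil.which(exe) is not None
    for mod in modules:
        # find_spec locates a top-level module without importing it.
        report[mod] = importlib.util.find_spec(mod) is not None
    return report


def format_report(report):
    """Turn the report into [OK] / [WARNING] lines."""
    return [f"[{'OK' if ok else 'WARNING'}] {name}" for name, ok in report.items()]


for line in format_report(check_dependencies()):
    print(line)
```

A [WARNING] for an executable usually means it is not on PATH in the shell that launched Python, which is the case the Manual Fixes step addresses.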

Compatibility Notes:

Windows Focus: .bat launchers for easy setup; on Linux/macOS, run the app manually with Python.

SD Integration: Frames export as numbered sequences (e.g., %04d.png) for direct import into Kohya or DreamBooth.

No A1111 Extension: Standalone app—pair with ControlNet for video-to-image pipelines.

Warnings: Large files may need 8GB+ RAM; GPU recommended for Demucs (else CPU is slow). NSFW content handled per source policies.
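On the numbered-sequence point under SD Integration: %04d is a zero-padded counter, so frames sort correctly by name. If frames come back from external edits with arbitrary names, a small plan like the one below maps them back onto that pattern. Both helpers are hypothetical and only compute names; they do not touch the disk.

```python
def sequence_names(count, pattern="%04d.png", start=1):
    """Return zero-padded names such as 0001.png, 0002.png, ..."""
    return [pattern % i for i in range(start, start + count)]


def plan_renames(filenames, pattern="%04d.png", start=1):
    """Map existing names (sorted alphabetically) onto the numbered pattern."""
    ordered = sorted(filenames)
    return dict(zip(ordered, sequence_names(len(ordered), pattern, start)))


print(sequence_names(3))
print(plan_renames(["shot_b.png", "shot_a.png", "shot_c.png"]))
```

Alphabetical sorting is an assumption here. If your editor exports names like frame_2.png and frame_10.png, sort numerically instead before renaming.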

Usage Examples

LoRA Training Prep: Download anime clip → Extract PNG frames → Upscale 4x → Use in Kohya_ss dataset.

Audio-Reactive Art: Separate song vocals → Generate SD images with "vocal waveform" prompts.

Video Dataset: Batch-download 50 YouTube vids → Frames + stems → Train Flux on motion data.

Changelog (v6 Highlights)

Enhanced Reddit URL parsing.

Queue improvements + custom args.

Dark theme with better readability.

Bug fixes for Demucs GPU detection.

Description

Video Media Toolkit v7: Download, Decompose & Rebuild Media with AI Precision

Overview

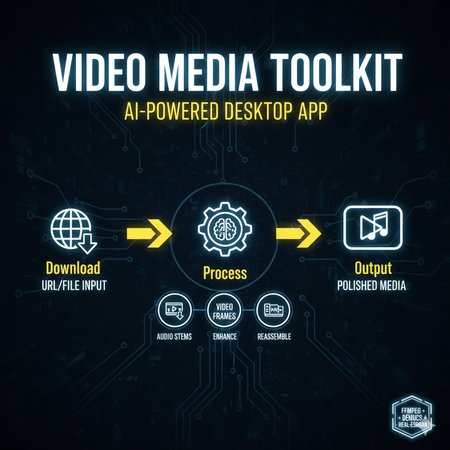

Level up your AI art and media workflows with Video Media Toolkit v7, a free, open-source desktop utility for creators, editors, and dataset builders. This all-in-one Windows app automates the entire video/audio processing pipeline—download, extract, enhance, and reassemble—making it indispensable for Stable Diffusion, AnimateDiff, RVC, and other AI-driven projects.

Version 7 introduces Speaker Diarization (pyannote.audio), improved Demucs stem separation, a virtual environment auto-installer, and expanded GPU acceleration for faster processing. Whether you’re prepping LoRA datasets, isolating voices for AI dubbing, or upscaling low-res frames for 4K model training, this toolkit cuts through hours of manual FFmpeg scripting.

🔧 Key Features

1. Download Tab — Source & Extract Media

Pull videos or audio from YouTube, Reddit, or local files.

Export as MP4, MP3, or frame sequences (JPG/PNG).

Apply resolution (360p–8K), FPS, sharpening, denoise, and color correction.

Queue multiple jobs for batch automation with real-time progress logs.
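To make the download queue concrete, here is a minimal sketch of sequential job processing on top of yt-dlp. The names build_ytdlp_cmd and run_queue, the job tuple layout, and the example URLs are hypothetical. The yt-dlp options shown (-o, -x, --audio-format, -f, --merge-output-format) are real, but whether the toolkit uses exactly these is an assumption.

```python
from collections import deque


def build_ytdlp_cmd(url, out_dir, audio_only=False):
    """Return a yt-dlp argument list for one job (nothing is executed)."""
    # %(title)s and %(ext)s are yt-dlp output-template fields.
    cmd = ["yt-dlp", "-o", f"{out_dir}/%(title)s.%(ext)s"]
    if audio_only:
        # Extract the audio track and convert it to MP3.
        cmd += ["-x", "--audio-format", "mp3"]
    else:
        # Best video plus best audio, merged into an MP4 container.
        cmd += ["-f", "bv*+ba/b", "--merge-output-format", "mp4"]
    cmd.append(url)
    return cmd


def run_queue(jobs, runner):
    """Process (url, out_dir, audio_only) jobs one at a time, in order."""
    pending = deque(jobs)
    results = []
    while pending:
        url, out_dir, audio_only = pending.popleft()
        results.append(runner(build_ytdlp_cmd(url, out_dir, audio_only)))
    return results


jobs = [
    ("https://example.com/video-a", "downloads", False),
    ("https://example.com/video-b", "downloads", True),
]
# A dry-run runner that just echoes each command instead of executing it.
for cmd in run_queue(jobs, runner=lambda c: c):
    print(cmd)
```

Passing the runner in as a callable keeps the queue testable. Replace the lambda with one that calls subprocess.run(c, check=True) to perform real downloads.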

2. Reassemble Tab — Frame-to-Video Rebuilding

Combine image sequences into stabilized, high-quality videos.

Merge with separated audio or re-mixed stems.

Filters: minterpolate, tmix, deflicker, deshake for cinematic smoothness.

3. Audio Tab — Demucs AI Stem Separation

Isolate vocals, drums, bass, and other instruments.

Supports 2-stem (vocals + instrumental) or full 6-stem separation.

GPU or CPU modes with automatic model management.

4. Upscale Tab — Real-ESRGAN Image Enhancement

Upscale extracted frames 2x–4x with advanced AI clarity.

Great for low-res sources or preparing datasets for ComfyUI & A1111.

5. Diarize Tab — Speaker Separation (New in v7)

Identify and extract individual speakers using pyannote.audio.

Requires free Hugging Face token (one-time setup).

Automatically merges detected speech clips into organized audio files.
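The merging step can be pictured as grouping diarization turns by speaker and joining turns separated by only a short pause. The sketch below assumes the diarizer output has already been reduced to (start, end, speaker) tuples in seconds. The function name, the 0.5-second gap threshold, and the SPEAKER_00-style labels are illustrative assumptions, not the toolkit's documented behavior.

```python
from collections import defaultdict


def merge_speaker_segments(segments, max_gap=0.5):
    """Group (start, end, speaker) turns per speaker and join close ones.

    Returns {speaker: [(start, end), ...]} with spans in time order.
    """
    merged = defaultdict(list)
    for start, end, speaker in sorted(segments):
        spans = merged[speaker]
        if spans and start - spans[-1][1] <= max_gap:
            # Close enough to the previous turn: extend it.
            spans[-1] = (spans[-1][0], max(spans[-1][1], end))
        else:
            spans.append((start, end))
    return dict(merged)


turns = [
    (0.0, 2.0, "SPEAKER_00"),
    (2.2, 4.0, "SPEAKER_00"),
    (4.5, 6.0, "SPEAKER_01"),
    (9.0, 10.0, "SPEAKER_00"),
]
for speaker, spans in merge_speaker_segments(turns).items():
    print(speaker, spans)
```

Each merged span can then be cut from the source audio and concatenated into one file per speaker, for example with FFmpeg.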

🚀 Performance & Compatibility

Fully integrated with yt-dlp, FFmpeg, Demucs, Real-ESRGAN, and pyannote.audio.

GPU acceleration via CUDA for blazing-fast upscaling and separation.

Tested on Windows 10/11 (Python 3.8+).

Works seamlessly with Stable Diffusion, Kohya_ss, ComfyUI, and AnimateDiff workflows.

🧩 Installation

Download and extract the toolkit.

Run video_media_installer.bat; it auto-creates a Python virtual environment and installs dependencies.

Ensure FFmpeg and yt-dlp are installed and on your PATH.

Launch the app with launch_video_toolkit_v7.bat.

Optional: Add your Hugging Face token in the Diarize tab to unlock AI speaker separation.
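On Linux/macOS, or if the .bat installer fails, the virtual-environment step can be reproduced by hand. This sketch uses Python's standard venv module. The folder name, helper names, and package list are assumptions for the example; check the project's README for the actual requirements.

```python
import os
import venv
from pathlib import Path


def venv_python(env_dir):
    """Return the path of the interpreter inside a virtual environment."""
    env_dir = Path(env_dir)
    if os.name == "nt":
        return env_dir / "Scripts" / "python.exe"
    return env_dir / "bin" / "python"


def build_install_cmd(env_dir, packages):
    """Return a pip command that installs packages into that environment."""
    return [str(venv_python(env_dir)), "-m", "pip", "install", *packages]


def create_env(env_dir):
    """Create the environment with pip available (this writes to disk)."""
    venv.create(env_dir, with_pip=True)


# Illustrative package list only; not the installer's confirmed contents.
print(build_install_cmd("toolkit_env", ["PySide6", "demucs", "pyannote.audio"]))
```

Call create_env("toolkit_env") once, then run the printed command with subprocess.run(cmd, check=True). Using the environment's own interpreter for pip keeps the install isolated from the system Python.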

💡 Use Cases

Extract frames → Upscale → Reassemble → Train LoRA.

Separate vocals → Generate AI music → Sync to visuals.

Diarize interviews or podcasts → Train voice-based AI models.

Build video datasets for motion-aware models (Flux, AnimateDiff, etc.).

📝 Changelog (v7 Highlights)

New Speaker Diarization Tab powered by pyannote.audio.

Simplified installer with venv isolation for clean dependency management.

Optimized GPU utilization across all major tabs.

Enhanced stability, dark theme readability, and dependency diagnostics.

Video Media Toolkit v7 — your complete command center for media-to-AI workflows.

Download, dissect, and rebuild creative assets with zero friction.
