NEW LTX-2 Workflows here: https://civarchive.com/models/2318870

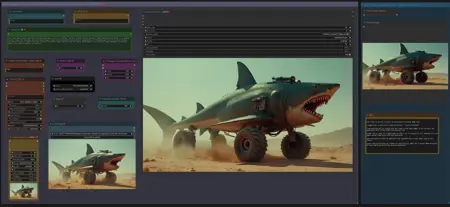

Workflow: Image -> Autocaption (Prompt) -> LTX Image to Video

Note: LTX Prompt Enhancer (LTXPE) may have issues with the latest ComfyUI and Lightricks updates.

Update July 20th 2025: GGUF Models for LTX 0.9.8:

Distilled model, works with V9.5: https://huggingface.co/QuantStack/LTXV-13B-0.9.8-distilled-GGUF/tree/main

Dev model, works with V9.0: https://huggingface.co/QuantStack/LTXV-13B-0.9.8-dev-GGUF/tree/main

(see "Model Card" in above links for LTX 0.9.8 VAE and textencoder downloads)

V9.5: LTX 0.9.7 Distilled Workflow supporting LTX 0.9.7 Distilled GGUF Model.

There is a workflow with Florence and another one with LTX Prompt Enhancer (LTXPE)

GGUF Model can be downloaded here:

https://huggingface.co/wsbagnsv1/ltxv-13b-0.9.7-distilled-GGUF/tree/main

VAE and Textencoder are identical to previous LTX 0.9.6 model (see V8.0 below)

LTX 0.9.7 Distilled is using only 8 steps and is very fast.

V9.0: LTX 0.9.7 Workflow supporting LTX 0.9.7 GGUF Model.

There is a workflow with Florence and another one with LTX Prompt Enhancer (LTXPE)

GGUF Model can be downloaded here:

https://huggingface.co/wsbagnsv1/ltxv-13b-0.9.7-dev-GGUF/tree/main

VAE and Textencoder are identical to previous LTX 0.9.6 model (see V8.0 below)

LTX 0.9.7 is a 13-billion-parameter model; previous versions had only 2 billion parameters, so it uses more VRAM and takes longer to process. For very fast rendering, try V8.0 below with model 0.9.6, or V9.5.

V8.0: LTX 0.9.6 Workflow (dev and distilled GGUF model in same workflow)

There is a version with Florence2 caption and a version with LTX Prompt Enhancer (LTXPE).

GGUF Models (Dev & Distilled) can be downloaded here:

https://huggingface.co/calcuis/ltxv0.9.6-gguf/tree/main

vae: pig_video_enhanced_vae_fp32-f16.gguf

Textencoder: t5xxl_fp32-q4_0.gguf

V7.0: LTX 0.9.5 Model Version GGUF with Wavespeed/Teacache.

LTX 0.9.5 GGUF Model and VAE: https://huggingface.co/calcuis/ltxv-gguf/tree/main

(vae_ltxv0.9.5_fp8_e4m3fn.safetensors)

Clip Textencoder: https://huggingface.co/city96/t5-v1_1-xxl-encoder-gguf/tree/main

There are 2 workflows: a main workflow with Florence caption only, and an additional one with Florence and LTX Prompt Enhancer. Both are set up with Wavespeed (bypassed by default; press Ctrl+B to activate).

workflow works with all GGUF models: 0.9 / 0.9.1 / 0.9.5

uncensored LLM for Prompt enhancer: https://huggingface.co/skshmjn/unsloth_llama-3.2-3B-instruct-uncenssored

-Outdated (march 2025)- V6.0: GGUF/TiledVAE Version & Masked Motion Blur Version

Updated the workflow with GGUF Models, which save Vram and run faster.

There is a Standard Version, which uses just the GGUF models, and a GGUF+TiledVae+Clear Vram Version, which reduces VRAM requirements even further. Tested with the larger GGUF model (Q8) at a resolution of 1024, 161 frames and 32 steps: the GGUF Version peaked at 14 GB of VRAM, while the TiledVae+ClearVram Version peaked at 7 GB. Smaller GGUF models might reduce requirements further.

GGUF Model, VAE and Textencoder can be downloaded here:

(Model&VAE): https://huggingface.co/calcuis/ltxv-gguf/tree/main

(anti Checkerboard Vae): https://huggingface.co/spacepxl/ltx-video-0.9-vae-finetune/tree/main

(Clip Textencoder): https://huggingface.co/city96/t5-v1_1-xxl-encoder-gguf/tree/main

Use the GGUF Version with 16 GB or more of VRAM, and the TiledVae+ClearVram Version with less than 16 GB.

Masked Motion Blur Version: Since LTX is prone to motion blur, an extra group was added to the workflow that lets you set a mask on the input image and apply motion blur to the masked area to trigger specific motion. (It sounds better than it actually works, but it is useful in some cases.) GGUF and GGUF+TiledVAE+ClearVram versions are included.

V5.0: Support for new LTX Model 0.9.1.

included an additional workflow for LowVram (Clears Vram before VAE)

added a workflow to compare LTX Model 0.9.1 vs LTX Model 0.9

(V4 did not work with 0.9.1 when the model was released, hence V5 was created. This has changed as ComfyUI and the nodes were updated in the meantime: both models (0.9 and 0.9.1) now work with V4 as well as V5. The two versions use different custom nodes to manage the model; other than that, they are the same. If you run into memory issues or long processing times, see the tips at the end.)

-Outdated (march 2025)- V4.0: Introducing Video/Clip extension :

Extend a clip based on the last frame of the previous clip. You can extend a clip about 2-3 times before quality starts to degrade; see more details in the notes of the workflow.

Added a feature to use your own prompt and bypass florence caption.

V3.0: Introducing STG (Spatiotemporal Skip Guidance for Enhanced Video Diffusion Sampling).

Included a SIMPLE and an ENHANCED workflow. The Enhanced version has additional features to upscale the input image, which can help in some cases. The SIMPLE version is recommended.

Replaced the height/width node with a "Dimension" node that drives the video size (default = 768; increasing it to 1024 improves resolution but might reduce motion, and also uses more VRAM and time). Unlike previous versions, the image is not cropped.

Included a new node, "LTX Apply Perturbed Attention", representing the STG settings (for more details on values/limits, see the note within the workflow).

Enhanced Version has an additional switch to upscale Input Image (true) or not (false). Plus a scale value (use 1 or 2) to define the size of the image before being injected, which can work a bit like supersampling. As said, not required in most cases.

Pro Tip: Besides using a CRF value of around 24 to drive movement, increase the frame rate in the yellow Video Combine node from 1 to 4+ to trigger further motion when the outcome is too static.

Node "Modify LTX Model" changes the model within a session. If you switch to another workflow, make sure to hit "Free model and node cache" in ComfyUI to avoid interference. If you bypass this node (Ctrl+B), you can do Text2Video.

V2.0: ComfyUI Workflow for Image-to-Video with Florence2 Autocaption

This updated workflow integrates Florence2 for autocaptioning, replacing BLIP from version 1.0, and includes improved controls for tailoring prompts towards video-specific outputs.

New Features in v2.0

Florence2 Node Integration

Caption Customization

A new text node allows replacing terms like "photo" or "image" in captions with "video" to align prompts more closely with video generation.
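As a rough illustration of what this replacement does, here is a minimal Python sketch outside ComfyUI. The function name and the exact word list are assumptions for illustration; the workflow's text node may match terms differently.

```python
import re

# Hypothetical helper: swaps still-image words for a video-oriented term.
# The word list ("photo", "image") follows the examples in the description.
STILL_WORDS = re.compile(r"\b(photo|image)\b", flags=re.IGNORECASE)

def videoize_caption(caption: str, replacement: str = "video") -> str:
    """Return the caption with whole-word 'photo'/'image' replaced."""
    return STILL_WORDS.sub(replacement, caption)

if __name__ == "__main__":
    print(videoize_caption("A photo of a woman walking on a beach"))
```

Only whole words are matched, so a word like "photograph" is left untouched in this sketch.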

V1.0: Enhanced Motion with Compression

To mitigate "no-motion" artifacts in the LTX Video model:

Pass input images through FFmpeg using H.264 compression with a CRF of 20–30.

This step introduces subtle artifacts, helping the model latch onto the input as video-like content.

CRF values can be adjusted in the yellow "Video Combine" node (lower-left GUI).

Higher values (25–30) increase motion effects; lower values (~20) retain more visual fidelity.
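In the workflow this happens inside the Video Combine node. For anyone who wants to try the same idea outside ComfyUI, the following is a minimal sketch, assuming the `ffmpeg` command-line tool with `libx264` is installed. The function names and file paths are hypothetical; the sketch encodes the image as a one-frame H.264 video at a chosen CRF and decodes that frame back to an image.

```python
import subprocess

def build_crf_commands(src_image, tmp_video, dst_image, crf=24):
    """Build two ffmpeg commands: encode the image at the given CRF, then decode it."""
    if not 0 <= crf <= 51:
        raise ValueError("x264 CRF must be between 0 and 51")
    encode = [
        "ffmpeg", "-y",
        "-loop", "1", "-i", src_image,   # treat the still image as a video source
        "-frames:v", "1",                # encode a single frame
        "-vf", "scale=trunc(iw/2)*2:trunc(ih/2)*2",  # yuv420p needs even dimensions
        "-c:v", "libx264", "-crf", str(crf),
        "-pix_fmt", "yuv420p",
        tmp_video,
    ]
    decode = ["ffmpeg", "-y", "-i", tmp_video, "-frames:v", "1", dst_image]
    return [encode, decode]

def degrade_image(src_image, tmp_video, dst_image, crf=24):
    """Run both commands; requires ffmpeg on the PATH."""
    for cmd in build_crf_commands(src_image, tmp_video, dst_image, crf):
        subprocess.run(cmd, check=True)

if __name__ == "__main__":
    # Print the commands only; call degrade_image() to actually run them.
    for cmd in build_crf_commands("input.png", "tmp.mp4", "degraded.png", crf=24):
        print(" ".join(cmd))
```

Starting around CRF 24 and moving toward 30 only when the result is too static matches the guidance above.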

Autocaption Enhancement

Text nodes for Pre-Text and After-Text allow manual additions to captions.

Use these to describe desired effects, such as camera movements.
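Conceptually, the final prompt is the autocaption wrapped by the two manual texts. A minimal sketch of that assembly, assuming the parts are simply joined with spaces (the function name and the separator are assumptions; the workflow's nodes may concatenate differently):

```python
def build_prompt(caption: str, pre_text: str = "", after_text: str = "") -> str:
    """Join Pre-Text, autocaption and After-Text, skipping empty parts."""
    parts = [p.strip() for p in (pre_text, caption, after_text) if p and p.strip()]
    return " ".join(parts)

if __name__ == "__main__":
    print(build_prompt(
        "A woman walks on a beach.",
        pre_text="Slow camera pan to the right.",
        after_text="Waves roll in the background.",
    ))
```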

Adjustable Input Settings

Width/Height & Scale: Define image resolution for the sampler (e.g., 768×512). A scale factor of 2 enables supersampling for higher-quality outputs. Use a scale value of 1 or 2. (changed to dimension node in V3)

Pro Tips

Motion Optimization: If outputs feel static, incrementally increase the CRF & frame rate value or adjust Pre-/After-Text nodes to emphasize motion-related prompts.

Fine-Tuning Captions: Experiment with Florence2’s caption detail levels for nuanced video prompts.

If you run into memory issues (OOM or extreme process time) try the following:

use the LowVram version of V5

use a GGUF Version

press "free model and node cache" in comfyui

set starting arguments for comfyui to --lowvram --disable-smart-memory

See the file "run_nvidia_gpu.bat" in your ComfyUI folder and edit the line to: python.exe -s ComfyUI\main.py --lowvram --disable-smart-memory

switch off hardware acceleration in your browser

Credits go to Lightricks for their incredible model and nodes:

Description

LTX 0.9.7 with Florence2 caption

Comments (19)

What resolution can it generate?

default is 768. 1024 works as well.

For some reason my Video Combine node doesn't display the CRF widget. The workflow works, but I get either no motion or too much of it; I assume that's due to the missing CRF option on my end.

does anyone know how to go about fixing this?

Thanks for this great workflow. Is there any way to increase the length of the video?

Yes, see the "video length" node, set to 97 (3sec). Other usable values: 129 (5sec) or max 257 (10sec). Check the experimental workflow; it can extend clips as well.
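The three values quoted in this reply (97, 129, 257) all have the form 8n+1. Assuming the "video length" node expects lengths of that form, which is inferred from those values rather than stated in the thread, a small hypothetical helper could snap an arbitrary frame count to the nearest such value and cap it at the quoted maximum:

```python
def snap_frame_count(frames: int, max_frames: int = 257) -> int:
    """Snap a frame count to the nearest value of the form 8n + 1, capped at max_frames."""
    if frames < 1:
        raise ValueError("frames must be at least 1")
    n = round((frames - 1) / 8)   # nearest multiple of 8 below the "+1"
    return min(8 * n + 1, max_frames)

if __name__ == "__main__":
    for wanted in (97, 100, 130, 300):
        print(wanted, "->", snap_frame_count(wanted))
```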

I get OOM issues somewhat frequently with 16GB, but I saw your comment that 8GB was able to generate 10 sec videos.

What are the parameters/inputs that would impact that VRAM usage most? Input image size? Or something else?

8 GB of VRAM was confirmed to work. Dimension and video length probably have the biggest impact on usage.

Increase your page file size. If you have plenty of headroom, then increase it to 100gb. You can also use a different drive than the one your OS is on if you're running out of room.

@tremolo28 I cut down the output video dimensions and saw more success, although it was still hitting OOM for some generations.

@Tsterbta I'll have to check on that next. I had already upped to 32GB as that was my RAM size. But I'll play around with higher values.

Thanks to you both.

Thx a lot for the v3 and experimental workflows.

I do have two questions:

1.

How do i set the input picture resolution to it's matching scale here in dimension node?

Like source is height 1536 x width 1024

Do i have to add 768 (height) then into the dimension node if i want to keep this exact input aspect ratio?

I do not understand the dimension node atm.

2.

The crop nodes are unclear to me:

Do i have to deactivate these so it uses the native picture dimensions and not just a frame like "cropped", is this correct?

I want to use the identical picture input to animate it without skipping anything of it's details and content.

Dimension set to 768 means: if your input image is 1536x1024, the output video will be 768x512. The node takes the larger of the input image's width and height and scales it to the dimension value, whether landscape or portrait. The image is not cropped; the whole input image is processed with its aspect ratio maintained.
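The arithmetic in this reply can be sketched as follows. This is a minimal illustration, not the node's actual code: the function name is hypothetical, and the real node may additionally round to a size the model requires, which is not modelled here.

```python
def output_size(width: int, height: int, dimension: int = 768) -> tuple[int, int]:
    """Scale so the longer side equals `dimension`, keeping the aspect ratio."""
    scale = dimension / max(width, height)
    return round(width * scale), round(height * scale)

if __name__ == "__main__":
    print(output_size(1536, 1024))   # landscape input
    print(output_size(1024, 1536))   # portrait input
```

For the portrait source from the question (width 1024, height 1536), the same rule gives a 512x768 video, so the value entered in the Dimension node is simply the desired length of the longer side.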

Thank you for this. Great work!

There are a few things I can't really understand. One is why you are using such weird custom nodes for simple variables like INT, FLOAT, STRING, etc.

I think it would be better to use the core nodes for these simple variables as I think a lot of people, me included, don't like to download and use libraries we don't actually need.

Also, the placement of your groups could improve to avoid the spaghetti which hurts the eyes and creates confusion just by looking at it.

Just a few inputs, otherwise, great work! Thank you!

You are right, I don't like having those simple nodes from different packs in the workflow either when there are core nodes. I have just been too busy to clean that up.

Regarding spaghetti, just hide them with a click.😄

Very good workflow. When I use videohelpersuite to load the video, skip the earlier frames, keep only the last frame, and then connect it to the next workflow, I found that each video shows a slightly worse color shift compared with the original image. So I added a ColorMatch node that references the original image, to keep the color shift in each output video as small as possible. I don't know if there is any other way; of course, you can also use pr to correct it.

Thanks , will try with colormatch on the experimental workflow to extend clips based on last frame. The clips seem to degenerate slowly, leaving the last frames at slightly lower quality. Was fiddling around with detail daemon to enhance last frames, but did not make a difference, also tried sharpen and upscale… Will try cm.

Tried ColorMatch, but it did not improve the quality of the last frame. I also tried videohelpersuite to access the last frame; there the color was off, as you described. You don't have this issue if you use the "final frame selector" node from the mediamixer custom nodes; see my experimental workflow.

Great workflow.

How do you set it up to produce high quality, non-destructive videos like those posted in the gallery?

Thanks. You can follow the info in the description. Also check the experimental workflow; it contains some further info in the notes. The LTX model is very fast, but not as sophisticated as Sora, so you will get destructive and low-quality clips in some cases. It is more a matter of cherry-picking the best results. Sometimes it takes 3-5 generations to get where you want, but generation time can be under 1 min.

@tremolo28 Thank you for the advice.

The images I generate have a strong blur, so I feel like they tend to break down easily.

I was generating the images at 9:16, but I found that cropping them to 1:1 or 3:4 makes them less likely to break down.