Nunchaku Fluxmania Legacy (by Spooknik)

Select the pruned fp16 model (6.3 GB).

KREAMANIA

I don't consider Kreamania a truly new model. It's just a slight improvement on Legacy: it injects a tiny bit of Krea (which, in my opinion, isn't a benchmark for realism) and adds some of my recent LoRA models. It would deserve the name Legacy+ more than Kreamania.

I find this version provides more detail and improved lighting. The composition remains almost identical to that of Legacy.

Note: Don't use "freckles" in the prompt.

Oddity: Unexpected appearance of text.

https://civarchive.com/models/1808412/wan-refine-fluxmania

https://civarchive.com/articles/17346/wan-refining-fluxmania-for-more-realism

Quantized versions of the Fluxmania Legacy model:

https://huggingface.co/belisarius/FLUX.1-dev-Fluxmania-Legacy-gguf/tree/main

Showcase images are in PNG and include metadata, prompt & workflow.

All licensing conditions for the Flux Dev model apply. You can find the details here.

Model orientation: Photography - Realism - Portraits - Artistic nude

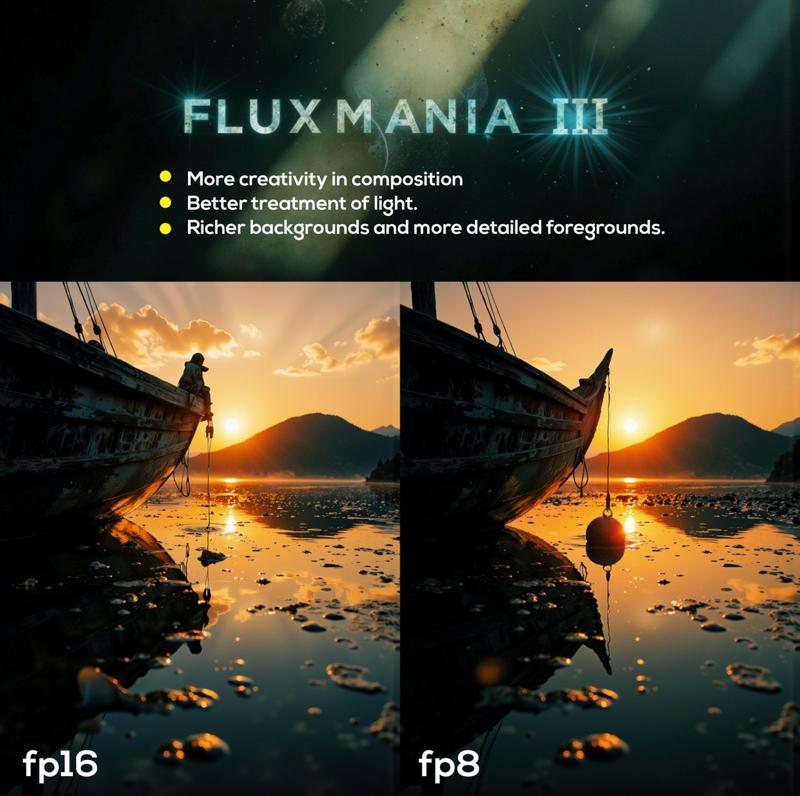

Fluxmania III:

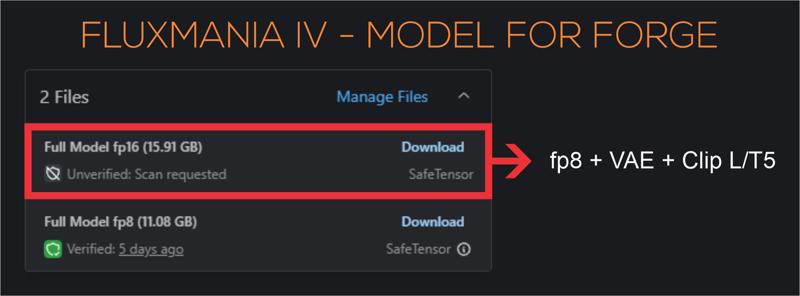

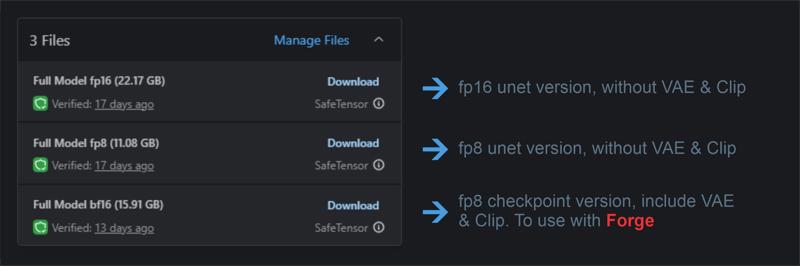

File 22.07 GB: UNet fp16, without VAE & CLIP

File 11.16 GB: UNet fp8, without VAE & CLIP

File 15.91 GB: checkpoint fp8 (not bf16), with VAE & CLIP-L full fp32
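As a rough cross-check of the file sizes above, weight storage is approximately parameter count × bytes per parameter. This sketch assumes about 12 billion parameters for the Flux.1-dev transformer (its publicly stated size) and ignores the file header and metadata. The helper name `estimate_size_gib` is hypothetical.

```python
# Rough size estimate for model weights: parameters x bytes per parameter.
# Assumption: ~12 billion parameters for the Flux.1-dev transformer (UNet file).
# File headers and metadata are ignored, so real files differ slightly.

BYTES_PER_PARAM = {
    "fp32": 4,
    "fp16": 2,
    "bf16": 2,
    "fp8": 1,
}


def estimate_size_gib(num_params: float, dtype: str) -> float:
    """Return the approximate weight storage in GiB (2**30 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 2**30


if __name__ == "__main__":
    params = 12e9  # assumed parameter count
    for dtype in ("fp16", "fp8"):
        print(f"{dtype}: ~{estimate_size_gib(params, dtype):.2f} GiB")
```

Run as a script, it prints about 22.35 GiB for fp16 and 11.18 GiB for fp8. These figures are in line with the 22.07 GB and 11.16 GB UNet files. Generation needs more memory than this, because the text encoders, the VAE and the activations also have to fit.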

Settings: dpmpp_2m / sgm_uniform / CFG 3.5 / 25 to 30 steps.

I recommend using this version of CLIP-L with the Fluxmania model.

Fluxmania I & II: UNet fp8 (no VAE, no CLIP) / Settings: dpmpp_2m / sgm_uniform / CFG 3.5 / 25 steps.

Genuine: UNet fp16 (no VAE, no CLIP) / Settings: Euler Simple / CFG 3.5 / 30 steps.

Description

Recommended settings: dpmpp_2m / sgm_uniform / 25 steps / flux guidance 2.5 to 3.5.

Recommended companion LoRA: Defluxion II

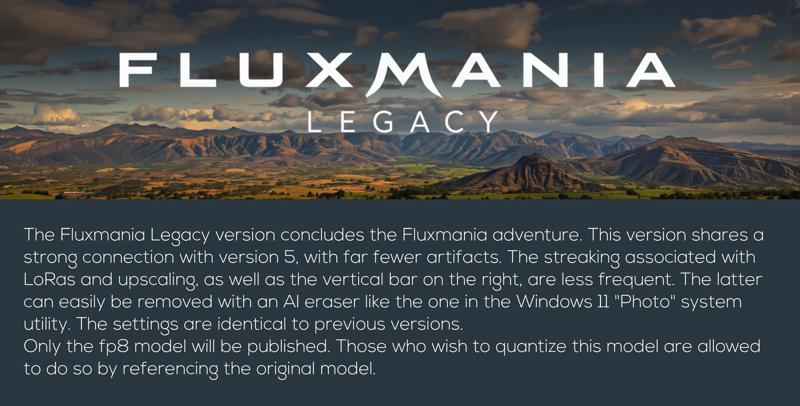

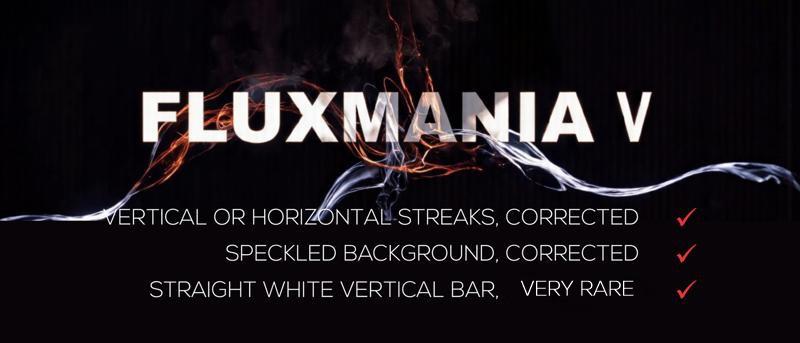

Version 5 merges several models. If you notice a vertical bar appearing on the right side of the image, you have probably applied a LoRA that is already included in Fluxmania V. In that case, significantly reduce that LoRA's weight to make the artifact disappear.

Example: Fluxmania V integrates Fluxartis II. If you still want to use this LoRA, lower its weight from the recommended 0.6 to 0.3.
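The following sketch offers one plausible way to picture why a LoRA that is already merged into Fluxmania V misbehaves. A LoRA adds a scaled low-rank update to a weight matrix, W' = W + s·(B·A). If the same LoRA is merged in and then applied again, the two strengths stack. The sketch assumes simple additive merging (the merge weights actually used are not stated), and all names and numbers in it are hypothetical.

```python
# Minimal sketch of LoRA application as an additive low-rank update:
#   W' = W + scale * (B @ A)
# Hypothetical scenario: a LoRA merged into a checkpoint, then applied again.


def matmul(b, a):
    """Multiply matrix b (m x r) by matrix a (r x n), both as lists of lists."""
    return [[sum(b[i][k] * a[k][j] for k in range(len(a)))
             for j in range(len(a[0]))] for i in range(len(b))]


def apply_lora(w, b, a, scale):
    """Return W + scale * (B @ A) as a new matrix; w is not modified."""
    delta = matmul(b, a)
    return [[w[i][j] + scale * delta[i][j] for j in range(len(w[0]))]
            for i in range(len(w))]


if __name__ == "__main__":
    w = [[1.0, 0.0], [0.0, 1.0]]   # toy 2x2 base weight
    b = [[1.0], [2.0]]             # toy rank-1 LoRA factors
    a = [[0.5, 0.5]]
    merged = apply_lora(w, b, a, 0.6)        # hypothetical: LoRA baked in at 0.6
    stacked = apply_lora(merged, b, a, 0.6)  # same LoRA applied again at 0.6
    single = apply_lora(w, b, a, 1.2)        # one application at 0.6 + 0.6
    diff = max(abs(x - y) for rs, ro in zip(stacked, single)
               for x, y in zip(rs, ro))
    print(f"stacked vs. single 1.2: max difference {diff:.1e}")
```

Under this additive assumption, applying the LoRA again at 0.6 behaves like a single application at the combined strength. Lowering it to 0.3, as recommended above, halves the extra contribution.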

FAQ

Comments (93)

Do you plan to upload the full bf16 version of V5?

Fluxmania V is composed of 13 LoRAs and 2 checkpoints, so that's impossible: I don't have enough VRAM to produce a bf16 or fp16 version.

@Adel_AI No worries, I'll test it, thank you. By the way, what other checkpoint did you use for it? And one last question: the sampler and CFG should be the same as for version 4, right?

@P_Universe For the checkpoints, I used Flux1.D and Real Flux.

Yes, sampler and cfg similar to version 4.

@Adel_AI Thank you. I hope you can add the UltraReal finetune and the next Juggernaut Flux in the future 🙌🏻

@Adel_AI You did it!

All renders are blurred in Forge with Fluxmania V (with the recommended settings: dpmpp_2m / sgm_uniform / 25 steps / flux guidance 2.5 to 3.5).

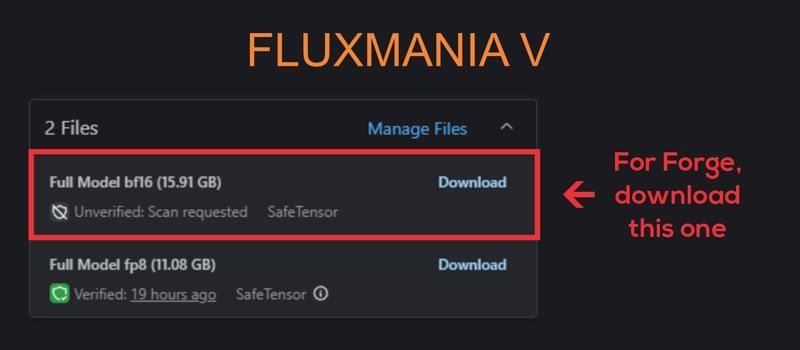

It's blurred because the model is a UNet without a VAE, and Forge doesn't support UNet-only files. I should publish a checkpoint that integrates CLIP and the VAE; I'll do it soon.

The settings I mentioned are for ComfyUI; feel free to experiment with other settings on Forge.

The checkpoint is now available: it is the one labeled "Full model bf16" (15.91 GB).

In Forge, you connect the CLIP and VAE separately, and there's nothing wrong with that. For some reason, CFG 3.5 gives mostly blurred results there. CFG 2 to 2.5 gives better results.

@tigerart This is still odd, because varying cfg from 2.5 to 3.5 shouldn't impact the rendering that much; there's a variation, but not to the point of completely blurring the rendering.

I'm not a Forge user, so sorry I can't help you. But keep experimenting with other settings; you'll probably end up finding the right settings window.

Also test the new checkpoint, maybe it will give better results. I've always been told that Forge handles checkpoints better than unet.

@Adel_AI Is the quality any different between the two download versions you've listed for V? I see you've added the CLIP and VAE to the larger model, but is the smaller model just as good, only without the extra files?

@97Buckeye It's exactly the same model.

@Adel_AI Thank you for your hard work. I use Forge too. I like Comfy, and Forge is missing a lot of features, but I'm just a GUI type of person. Fluxmania 3 has been the only checkpoint I've been using lately. Gonna give this one a shot.

@cutetodeath78409597 Thanks for the feedback, and show us some good results with this new version!

Same here, setting the CFG scale above 1 in Forge generates blurred images. Gens look great at CFG 1 and distilled CFG 3.5 though.
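The following is a possible explanation for the Forge reports above. It assumes that Forge's "CFG scale" is standard classifier-free guidance, and that its "Distilled CFG" is the guidance value passed to the guidance-distilled Flux.1-dev model (the role FluxGuidance plays in ComfyUI). Standard CFG combines a conditional prediction and an unconditional prediction. At scale 1, the result is exactly the conditional prediction, so the extra pass changes nothing. Above 1, the model's already-guided prediction is pushed further, which may contribute to the blur. The function name below is hypothetical, and the predictions are plain lists.

```python
# Classifier-free guidance (CFG) combination step, shown on plain lists:
#   guided = uncond + scale * (cond - uncond)
# With scale == 1.0 this equals `cond` (up to float rounding), so the
# unconditional (negative) pass has no effect.


def cfg_combine(cond, uncond, scale):
    """Blend conditional and unconditional predictions element-wise."""
    return [u + scale * (c - u) for c, u in zip(cond, uncond)]


if __name__ == "__main__":
    cond = [0.2, -0.4, 1.0]    # toy model outputs (hypothetical values)
    uncond = [0.1, -0.1, 0.5]
    print(cfg_combine(cond, uncond, 1.0))  # same as cond
    print(cfg_combine(cond, uncond, 3.5))  # pushed further away from uncond
```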

@WVVWVVW It might be time to switch to ComfyUI. Forge is no longer updated, and it's hard for non-node-based interfaces to keep up. ComfyUI is establishing itself as the essential interface in open source and even beyond.

Spot on, Adel, great work ;) thanks

Thanks, my friend.

I get a black image when using CLIP-L. I'm using an RTX 4060 with ComfyUI offline. When I try another CLIP model it works, but the generated images are of poor quality.

What interface are you working on and which model did you download?

Clip doesn't produce a black image.

If you're on Forge, you need to download the 15GB model; it's a package that includes VAE and Clip. Forge doesn't handle models in unet format well.

@Adel_AI I'm on ComfyUI on Linux. The 15 GB version worked for me, but the other one didn't.

@yurri31 If you are on ComfyUI both versions should work.

How long does it take for you on the 4060? I wish it were mandatory to state how much VRAM you need in the description of every model.

Which version of Fluxmania V should I get if I'm already using my own VAE and CLIP?

The 11 GB file contains only the Unet, intended primarily for ComfyUI.

The 15 GB file is a checkpoint that contains UNet + VAE + CLIP; use it with Forge.

@Adel_AI Okay! Thank you very much :) I love this btw.

@Adel_AI Hey, I don't quite get this. Shouldn't the fp16 version have a higher base quality even without the CLIP and VAE included? Or is the only difference for this model that one includes VAE + CLIP and the other doesn't, so both are fp8?

@melvinmicky All models are FP8. I labeled the FP8-based checkpoint as BF16 so that I could put both models on the same page. Civitai doesn't allow you to publish two FP8 models on the same page, and it doesn't offer a way to distinguish between a UNet and a checkpoint.

I explained this in the comments.

My PC configuration doesn't allow me to run an FP16 merge for version 5, because it requires 15 models, and I run into a memory error.
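You can check what a downloaded file actually contains (UNet only, or UNet + VAE + CLIP, and in which precision) by reading its safetensors header, without loading the weights. The header layout comes from the safetensors format: an 8-byte little-endian length, followed by a JSON table of tensor names, dtypes and shapes. The function names are hypothetical, and grouping keys by their first two dotted parts is only a heuristic.

```python
import json
import struct
import sys
from collections import Counter


def parse_header(raw: bytes) -> dict:
    """Parse a safetensors header from the leading bytes of a file.

    Layout: an 8-byte little-endian unsigned length N, then N bytes of JSON
    mapping tensor names to {"dtype", "shape", "data_offsets"}, plus an
    optional "__metadata__" entry.
    """
    (header_len,) = struct.unpack("<Q", raw[:8])
    return json.loads(raw[8:8 + header_len])


def read_header(path: str) -> dict:
    """Read only the header of a .safetensors file (tensor data is skipped)."""
    with open(path, "rb") as f:
        size_field = f.read(8)
        (header_len,) = struct.unpack("<Q", size_field)
        return parse_header(size_field + f.read(header_len))


def summarize(header: dict, prefix_depth: int = 2):
    """Count tensors per dtype and per key prefix (first `prefix_depth` parts)."""
    tensors = {k: v for k, v in header.items() if k != "__metadata__"}
    dtypes = Counter(v["dtype"] for v in tensors.values())
    prefixes = Counter(".".join(k.split(".")[:prefix_depth]) for k in tensors)
    return dtypes, prefixes


if __name__ == "__main__" and len(sys.argv) > 1:
    dtypes, prefixes = summarize(read_header(sys.argv[1]))
    print("dtypes:", dict(dtypes))
    for prefix, count in prefixes.most_common(10):
        print(f"{count:6d}  {prefix}")
```

In ComfyUI-style checkpoints, the diffusion model and the VAE usually sit under different key prefixes (for example `model.diffusion_model.` and `first_stage_model.`). Treat those names as assumptions and look at what your file actually lists. A file whose large tensors report `F8_E4M3` or `F8_E5M2` is stored in FP8, whatever the page label says.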

@Adel_AI Ah, got it. When you say you don't have the config to "run" an fp16 model, do you mean train? Are the required specs for training the same as for generating? So if I wanted to train an fp16 version of Flux, would I only need something like 16 GB of VRAM?

@fox_trot Good question, you're right, there must certainly be some stupidity in the air.

Apparently you're not a ComfyUI user. If you were, you'd know that with this interface, you don't need Clip and VAE associated with the Unet. It allows you to choose your own version of Clip and possibly VAE. You only need the Unet and nothing else.

@Adel_AI Uh, Forge also allows you to choose VAE and Clip.

@sd2472 I'm not a Forge user; I'm just passing on what users of this interface tell me. They can't get correct renders with the UNet, apparently even when they select the VAE and CLIP separately. In any case, ComfyUI is increasingly positioned as the essential interface for open-source models.

Really enjoying this model. Just wanted to note that I was getting severe banding with the flux canny lora, doesn't seem to happen with the depth lora.

If streaks appear, lower the strength of the ControlNet (CN); the same applies to LoRAs. Personally, I almost never use ControlNet. I prefer to act on the noise rather than on the model to steer the render.

Thank you for the feedback.

@Adel_AI What do you mean by acting on the noise? Sounds interesting. I would love to try this myself.

@mmdd2543 There are several different methods for influencing the latent. The three main approaches I use to orient my latent are:

- Use img2img to obtain the latent, with a high denoise between 0.85 and 1.0

- Combine latents or inject noise into the latent

- Use unsampler to generate the latent
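The first two approaches can be sketched on a toy latent stored as a flat list of numbers. The sketch assumes the rectified-flow formulation used by Flux, where a latent at noise level σ is (1 - σ)·x0 + σ·ε. It treats the img2img denoise value as that starting σ, which is a simplification, because interfaces actually map denoise onto a truncated sigma schedule. The function names are hypothetical, and unsampling is not shown.

```python
import random


def noise_like(latent, seed):
    """Gaussian noise with the same length as `latent` (reproducible via seed)."""
    rng = random.Random(seed)
    return [rng.gauss(0.0, 1.0) for _ in latent]


def noise_to_sigma(x0, noise, sigma):
    """Rectified-flow noising: (1 - sigma) * x0 + sigma * noise."""
    return [(1.0 - sigma) * x + sigma * n for x, n in zip(x0, noise)]


def blend_latents(a, b, alpha):
    """Linear combination of two latents: (1 - alpha) * a + alpha * b."""
    return [(1.0 - alpha) * x + alpha * y for x, y in zip(a, b)]


def inject_noise(latent, strength, seed):
    """Add extra Gaussian noise, scaled by `strength`, to an existing latent."""
    return [x + strength * n for x, n in zip(latent, noise_like(latent, seed))]


if __name__ == "__main__":
    x0 = [0.5, -0.2, 0.8, 0.0]  # toy encoded image latent (hypothetical)
    # img2img with a high denoise (0.85 to 1.0): start almost from pure noise,
    # keeping only a faint trace of the source composition.
    start = noise_to_sigma(x0, noise_like(x0, seed=42), sigma=0.9)
    # Combine the result with another latent, then perturb it slightly.
    other = noise_like(x0, seed=7)
    mixed = inject_noise(blend_latents(start, other, alpha=0.3), strength=0.1, seed=1)
    print(start)
    print(mixed)
```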

Why does it say "Updated" when it's still version V?

When an early-access period ends, the model is displayed as "Updated".

I run Stable Diffusion locally with AUTOMATIC1111. I downloaded the 15.92 GB file, but I can only produce blank images. Is it not compatible? Neophyte here. I love the detail in your images.

Haven't tried any Flux checkpoints. Are they any better than the original flux.dev model?

Flux1.D is a good base model, but it is almost devoid of any style, and its human renders have somewhat plasticky skin. Backgrounds are systematically blurred, and there is almost no variation in faces. Flux models, both fine-tuned and merged, bring variation and correct the shortcomings of the base model.

Of course, Fluxmania is in my top 3. Then you have the UltraRealism finetune and, I would say, PixelWave.

This is my first time trying this checkpoint; let me share my results.

You used Flux1.D, not Fluxmania.

It seems it's not working right now. Your checkpoints and others can be selected for web-based generation, but the default Flux1.D is used regardless of which checkpoint is chosen.

Hmmm, sorry for bothering...

@toshakin No problem. I suspected a malfunction in the new Civitai system. Fluxmania was among the 200 selected checkpoints, and some people had spent Buzz to select it, yet no online generations were made with my model, which seemed illogical to me. This bug will probably have to be reported to Civitai.

Is there a GGUF version of this?

Only fp8

It doesn't seem to recognize the VAE, etc. in InvokeAI. Tried both versions. Bummer.

Apart from comfyUI, all other interfaces have issues with Flux models.

Where can I find a sample workflow?

All my images embed their workflow; you just have to drop an image onto the ComfyUI interface.
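If you want to read the workflow outside ComfyUI, this standard-library sketch lists a PNG's text chunks. It assumes that the workflow is stored in uncompressed `tEXt` chunks under keys such as `workflow` and `prompt`. Compressed `zTXt` and `iTXt` chunks are skipped, and the function name is hypothetical.

```python
import struct
import sys

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"


def png_text_chunks(data: bytes) -> dict:
    """Return {keyword: text} for every tEXt chunk in PNG bytes."""
    if not data.startswith(PNG_SIGNATURE):
        raise ValueError("not a PNG file")
    texts = {}
    pos = len(PNG_SIGNATURE)
    while pos + 8 <= len(data):
        # Each chunk: 4-byte big-endian length, 4-byte type, data, 4-byte CRC.
        length, ctype = struct.unpack(">I4s", data[pos:pos + 8])
        body = data[pos + 8:pos + 8 + length]
        if ctype == b"tEXt":
            # tEXt body: Latin-1 keyword, a NUL separator, then Latin-1 text.
            keyword, _, text = body.partition(b"\x00")
            texts[keyword.decode("latin-1")] = text.decode("latin-1")
        if ctype == b"IEND":
            break
        pos += 12 + length  # length + type (8) + data + CRC (4)
    return texts


if __name__ == "__main__" and len(sys.argv) > 1:
    with open(sys.argv[1], "rb") as f:
        chunks = png_text_chunks(f.read())
    # Assumed ComfyUI-style keys: "workflow" (UI graph) and "prompt" (API graph).
    print(chunks.get("workflow", "no workflow chunk found"))
```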

Please try to fix the upscaling problem: there are lines and squares after upscaling. It's very sad to achieve this level of human texture and detail and then not be able to upscale it :((((

Yes, indeed, this upscaling problem has been mentioned to me. I admit that I never or very rarely upscale, and I haven't had time to test it. However, I think that if streak artifacts appear during upscaling, it's because they already existed very faintly in the original image and are greatly accentuated by the upscale. The underlying issue needs to be resolved first, for example by lowering the LoRA weights (if applicable), testing other seeds, etc.

Other avenues to explore include using different upscaling models.

If I find the time to work on it, I'll get back to you.

I have the same problem, and I can say that it depends on the FluxGuidance value. If I lower it to 1 or less, the artifacts disappear, but then the image loses quality.

Edit: Ultimate SD Upscaler in ComfyUI seems to work. I will post some pictures with the workflow included. But this is quick and dirty. Maybe there are better solutions.

@Pressydent For guidance, preferably do not go below 2.5.

@Adel_AI Hi sir, I hope you are well. I'd like to ask whether you could make a Kontext LoRA for realistic images, please? Thank you.

I am very pleased with the output quality and level of detail of this model. However, loading the model ("manual cast: torch.bfloat16" process) takes a very long time... Also, sometimes very light vertical lines appear in the sky.

Streaks can appear if you use LoRAs, in which case lowering their weight is enough to make them disappear. If the streaks appear without any LoRA, I lower the guidance a little, and they generally disappear; you can also vary the seed. I noticed that high-contrast and dark images are more prone to this artifact. Thank you for your appreciation and feedback.

Great work!

Hi! What's the difference between fp8 and bf16? (I use Invoke)

FP8: It uses only 8 bits, which makes it smaller and less precise. On GPUs with native FP8 support, it is also faster to process. It's ideal for reducing memory usage and speeding up operations.

BF16: It uses 16 bits and provides higher precision compared to FP8. It strikes a good balance between accuracy and performance, making it suitable for training large models where precision is crucial.

In short, FP8 prioritizes speed and memory efficiency, while BF16 focuses on better precision with reasonable performance.
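To make the precision difference concrete: a float format with m explicit mantissa bits has a relative step near 1.0 of 2^-m. The sketch below uses the standard bit layouts: BF16 has 1 sign, 8 exponent and 7 mantissa bits; FP16 is 1/5/10; FP8 E4M3 is 1/4/3; and FP8 E5M2 is 1/5/2. It rounds a float32 to BF16 by keeping its top 16 bits, with round-to-nearest-even. It does not emulate FP8 arithmetic, and the helper names are hypothetical.

```python
import struct

# (exponent bits, mantissa bits) of common floating-point formats;
# every format listed also has 1 sign bit.
FORMATS = {
    "fp32": (8, 23),
    "fp16": (5, 10),
    "bf16": (8, 7),
    "fp8_e4m3": (4, 3),
    "fp8_e5m2": (5, 2),
}


def epsilon(fmt: str) -> float:
    """Gap between 1.0 and the next representable value: 2 ** -mantissa_bits."""
    _, mantissa_bits = FORMATS[fmt]
    return 2.0 ** -mantissa_bits


def to_bf16(x: float) -> float:
    """Round a float32-representable value to bfloat16 (nearest, ties to even).

    BF16 keeps the top 16 bits of a float32: the same sign and exponent range,
    but only 7 mantissa bits. NaN is returned unchanged.
    """
    if x != x:  # NaN
        return x
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    rounding = 0x7FFF + ((bits >> 16) & 1)  # ties go to the even result
    bits = ((bits + rounding) >> 16) << 16
    return struct.unpack("<f", struct.pack("<I", bits))[0]


if __name__ == "__main__":
    for fmt in ("fp16", "bf16", "fp8_e4m3", "fp8_e5m2"):
        print(f"{fmt:9s} relative step near 1.0: {epsilon(fmt)}")
    print(to_bf16(1.0 + 2 ** -9))  # step too small for bf16: prints 1.0
    print(to_bf16(3.14159))        # prints 3.140625
```

An FP8 E4M3 weight near 1.0 can only move in steps of 0.125, against 0.0078125 for BF16. This is the precision trade-off described above.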

I'm only a beginner but I can't get this to work. I have about ten checkpoint models in total (including Flux dev fp8) but fluxmania is the only one which won't run using a basic workflow in ComfyUI. I get the error "ERROR: clip input is invalid: None" which seems to come from the negative text prompt? Any help appreciated

Hi, all my images are PNGs with the workflow (WF) included. Try loading one of my workflows to test with; there is no reason it shouldn't work (no negative prompt is needed).

I got this working by loading it as a Diffusion Model along with a Load VAE and DualCLIPLoader (basically the same workflow as you'd use for Flux1-dev but swapping out the diffusion model) so I'm still confused about why this is listed as a Checkpoint Merge. The terminology is not very clear, why not just call this a modified diffusion model? Hope this helps anyone else struggling trying to get this working. By the way, the results look very nice thanks!

@kennysladefan293 Same here; nowhere does it say where to save this model. It doesn't load properly with the PNG images on here. I assume Load Checkpoint is the only loader that works with this in ComfyUI???

Such a great model, thank you for this!

Thanks for your feedback.

Hello and thanks. Just to understand: which one is better for Forge, and which one for ComfyUI? Thanks

Hi, all models can be used in ComfyUI. I'm not a Forge user, but I think the 16 GB model is the most suitable, since it integrates CLIP & VAE.

@Adel_AI Thank you!

I am using the fp8 (11.08 GB) version very satisfactorily in Forge, with ae.safetensors + clip_l.safetensors + t5xxl_fp8_e4m3fn.safetensors loaded in the VAE/Text Encoder box.

Many images are generated with version V in very good quality in ComfyUI. But as soon as fog or clouds come into play, I get clearly visible vertical banding, even with your workflow. I tested it with the same prompts in ForgeUI and got the same banding.

If you can get this under control, it will certainly become a very good model.

Yes, I was told about this problem with version 5, and this artifact also affects upscaling. On my side, the vertical streaks only appear when I use LoRAs, and by adjusting the LoRA weights properly I can get rid of them. The model alone, without LoRAs, has never generated vertical streaks for me. On the other hand, the whitish vertical trace that sometimes appears on the right remains random and has no solution. I know it's annoying. But I'm facing two problems. First, I'm short of time (it took me 2 months to get V5). Second, the nodes needed to properly test a model don't yet exist in ComfyUI. When you test a merge of checkpoints and LoRAs directly in a workflow, you get one result, and when you save the model, you get a radically different result. This gap forces me to save the model and then test it, which makes the procedure extremely tedious and long.

@Adel_AI So far I have only one solution for the streaks, which I use for images that I want to enlarge as 4K wallpaper: I use the Redefine option in Topaz Photo AI with Creativity 3 and a prompt. This takes about 3 minutes on my RTX 4060 and makes the stripes disappear completely in most cases.

I realize that you can't just create a new model on the fly. I will simply wait for the future and until then only use Fluxmania for prompts where there are no problems.

I think it is the best Flux checkpoint (V).

thanks

I agree this is the best. Probably said that already, but doesn't hurt to say it again.

@axlaim It must also be admitted that it has its flaws, particularly the streaking which increases with the upscale. I have been trying for a few weeks to work on an ultimate version of fluxmania, while keeping a small connection with version 5 and ridding it of this very annoying artifact.

I am really in love with this Flux checkpoint... it generates superb images every time for me. One query, though. I downloaded your Old man workflow, and you seem to be using T5_F8 and a ViT CLIP; I don't see the clip_l you recommend. Anyway, I downloaded "A" CLIP from the recommended page, the first of the six: "clipLCLIPGFullFP32_zer0intVisionCLIPL". I hope I have the right one; please confirm. Thanks again for your superb effort!!!

Hi, here is the link for the "CLIP-L & CLIP-G Full FP32" file. There is only one file:

https://civitai.com/models/1044804/clip-l-full-fp32-zer0int-and-simv4

Thanks for your feedback

Can I somehow quantize this model?

Yes, you have my permission.
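For anyone taking up that offer, the core idea of simple 8-bit block quantization can be sketched in a few lines. It mirrors the spirit of GGUF's Q8_0 type, which stores blocks of 32 weights as int8 values with one scale per block (scale = max|x| / 127). It is an illustration, not the llama.cpp/GGUF encoder: real tools also write the file format, store the scales as FP16 and choose a type per tensor. The function names are hypothetical.

```python
import random

BLOCK_SIZE = 32  # weights per block (Q8_0-style)


def quantize_block(values):
    """Return (scale, int8 list) with scale = max|x| / 127, q = round(x / scale).

    Python's round() uses ties-to-even, a minor difference from C's roundf().
    """
    amax = max(abs(v) for v in values)
    scale = amax / 127.0
    inv = 1.0 / scale if scale else 0.0  # an all-zero block stays all zeros
    return scale, [int(round(v * inv)) for v in values]


def quantize(weights):
    """Split a flat weight list into blocks and quantize each block."""
    return [quantize_block(weights[i:i + BLOCK_SIZE])
            for i in range(0, len(weights), BLOCK_SIZE)]


def dequantize(blocks):
    """Rebuild approximate weights: scale * q for every value in every block."""
    out = []
    for scale, quants in blocks:
        out.extend(scale * q for q in quants)
    return out


if __name__ == "__main__":
    rng = random.Random(0)
    weights = [rng.gauss(0.0, 0.02) for _ in range(128)]  # toy weights
    restored = dequantize(quantize(weights))
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"max abs error: {max_err:.2e}")
```

Each restored weight is within half a quantization step (scale / 2) of the original value. This is why an 8-bit version stays visually close to the source.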

Great checkpoint. The only downside I found is that it makes people much younger when I compare with Flux Dev.

Ah, I hadn't noticed the rejuvenation treatment the model applies; I'll keep an eye on that. Maybe I can sell it in the cosmetics section.

Hi Adel, how are you? I'm writing because I'd like you to make me a model based on Fluxmania V, IV, and III. I don't have the chat available here, and I don't know if it's paid, so if there's another way I can contact you, that's even better. Thanks.

Hi, thank you for your interest in Fluxmania. It would have been a pleasure to develop a model for you; unfortunately, I don't have the time to take on this kind of commitment. Fluxmania V required 2 months of testing. I'm currently working on the next version; it's been almost a month, and I haven't finished yet. My professional commitments leave me very little time for model development. One more point: developing a merge model is very personal, because compromises have to be made and the choices are very subjective. This is not the case when developing a LoRA, which is a more technical task.

@Adel_AI Greetings! I understand what you're saying about time, but if you ever have the time to do it, that would be great. If I could use another model, I wouldn't be writing to you; I'm writing because I really want one of yours. They fit what I need very well. I don't care if I have to wait three months; I'll wait. Regarding compromises, what do you mean?
