SVI Extend

https://github.com/vita-epfl/Stable-Video-Infinity/tree/svi_wan22

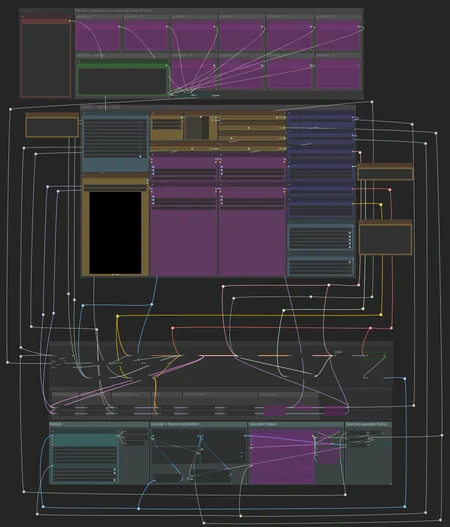

Create videos and extend them seamlessly using SVI.

The following SVI LoRAs are mandatory:

switch between default behaviour, anchor_samples and end_frames within the same subgraphs

connect an image to a part and enable the respective toggles to use end_frames or anchor_samples

NEW! v3

Extend existing videos using https://github.com/wallen0322/ComfyUI-Wan22FMLF

enable the "video extension" toggle in the settings

uses the source video resolution by default

to rescale the video, enable the "video rescale" toggle and set the megapixel slider

use the version of the nodes included in the .zip, or download the latest version straight from the Git repository if issues arise

More info inside the workflow.
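The megapixel-based rescale described above can be sketched in plain Python. This is only an illustration of the arithmetic, not the code the workflow nodes run: the function name `rescale_to_megapixels`, the definition of one megapixel as 1,000,000 pixels, and the rounding to a multiple of 16 are all assumptions made for the example.

```python
import math


def rescale_to_megapixels(width, height, megapixels, multiple=16):
    """Return (new_width, new_height) close to the requested megapixel count.

    Hypothetical helper: keeps the aspect ratio and snaps each side to a
    multiple of `multiple` (assumed here; the actual node may round differently).
    """
    if width <= 0 or height <= 0 or megapixels <= 0:
        raise ValueError("width, height and megapixels must be positive")

    # Assumption: 1 megapixel = 1,000,000 pixels.
    target_pixels = megapixels * 1_000_000

    # Scaling both sides by the same factor preserves the aspect ratio;
    # the pixel count grows with the square of that factor.
    scale = math.sqrt(target_pixels / (width * height))

    new_width = max(multiple, round(width * scale / multiple) * multiple)
    new_height = max(multiple, round(height * scale / multiple) * multiple)
    return new_width, new_height


if __name__ == "__main__":
    # A 1920x1080 source rescaled to roughly half a megapixel.
    print(rescale_to_megapixels(1920, 1080, 0.5))
```

Because both sides are snapped to a multiple of 16, the result only approximates the requested pixel count and the original aspect ratio.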

AIO i2v+t2v

All-in-one workflow for basic WAN 2.2 video generation.

The following features are included:

Switch seamlessly between 2 and 3 sampler solutions

Toggle between i2v or t2v

Postprod

Facedetailer

uses a t2v model + LoRA for inpainting; the required resources are included in the workflow

Toggle between GIMM VFI and RIFE VFI Interpolation

Upscale

TensorRT Upscale with Model

Basic Video Upscale with Model

RTX Video Super Resolution Upscale (insanely fast for decent quality)

Frame Clipper

Seamless Loops using custom RIFE nodes https://github.com/Artificial-Sweetener/comfyui-WhiteRabbit

Upscale + Interpolate

I recommend using this workflow instead of upscaling inside the generation workflows. You never really know what kind of result you will get, so you can end up upscaling a bad video and wasting time. I included toggles so you can't use multiple interpolation or upscale nodes at once by mistake.

This includes:

WAN Facedetailer

use any WAN 2.2 T2V low model + the following T2V LoRA:

lower resolution from 768 to 512 if you have VRAM issues

Put the following file into "ComfyUI\models\ultralytics\bbox":

WAN Refiner (massive VRAM cost)

increase denoise if you want more inpainting

Sharpen, Gamma, Brightness and Contrast control

Frame clipper (remove unwanted frames at the start and/or end)

GIMM VFI + RIFE VFI interpolation (I recommend GIMM VFI, much higher quality but also much slower)

TensorRT Upscale + Basic Video Upscale

both use basic image upscaling models

TensorRT (faster than Basic Video Upscale) with AnimeSharp4x is recommended for anime

RTX Video Super Resolution Upscale

insanely fast

decent quality

FlashVSR + SeedVR2

experimental

video upscale models that are more complex than basic image upscaling models

I haven't had great results for anime yet

takes a LOT longer

Saving last frame for manual extensions
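The frame clipper listed above (removing unwanted frames at the start and/or end) comes down to a slice over the frame sequence. The sketch below shows that idea on a plain Python list. The name `clip_frames` and its parameters are hypothetical and do not correspond to the inputs of the actual node.

```python
def clip_frames(frames, skip_start=0, skip_end=0):
    """Drop `skip_start` frames from the beginning and `skip_end` from the end.

    Hypothetical helper for illustration; `frames` can be any sliceable
    sequence (a list of images, or a batch indexed on its first axis).
    """
    if skip_start < 0 or skip_end < 0:
        raise ValueError("skip counts must not be negative")

    end = len(frames) - skip_end
    # If the two cuts overlap, return an empty clip instead of wrapping around.
    return frames[skip_start:max(end, skip_start)]


if __name__ == "__main__":
    frames = list(range(10))  # stand-in for 10 video frames
    print(clip_frames(frames, skip_start=2, skip_end=3))
```

Computing the end index explicitly avoids the pitfall of `frames[a:-b]`, which returns an empty sequence when `b` is 0.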

MMAudio

added an Audio Combine node

combine audio from an existing video with the generated audio on top

generate NSFW audio with the NSFW model, then combine that video with another audio track generated by the base model for background noise

removed interpolation for easier and faster audio generation; you have the following options:

upload the raw, non-upscaled video to the MMAudio Video node and the upscaled video to the Combine video node

upload the upscaled video to both nodes, but lower custom_width and custom_height of the MMAudio Video node to about half for faster generation and to prevent VRAM issues

upload the raw video to both nodes and upscale afterwards

Inspired by https://civarchive.com/models/2137833

The following resources are necessary (place them in ComfyUI\models\mmaudio):

https://huggingface.co/Kijai/MMAudio_safetensors/resolve/main/mmaudio_synchformer_fp16.safetensors

Description

This workflow uses a custom sampler approach with base WAN 2.2 models and the Painteri2v node. Generally this results in higher quality than premade checkpoints with baked-in LoRAs, but it needs a lot more tinkering with the LoRAs and their strength values.

I use both the Lightning 2.2 and 2.1 LoRAs together to fix the slow motion of the base 2.2 LoRAs. The Lightning LoRA settings are already baked into the workflow. The following Lightning LoRAs are needed:

This seems to result in better quality than the newer Lightning LoRAs, but I haven't invested as much time in fine-tuning them.

One caveat with the Painteri2v node is that it REALLY likes to move the camera around in unpredictable ways. Prompting "The camera doesn't move." or something similar helps to stabilize the scene, depending on what you want to generate.