DreamShaper - V∞!

Please check out my other base models, including SDXL ones!

Check the version description below (bottom right) for more info and add a ❤️ to receive future updates.

Do you like what I do? Consider supporting me on Patreon 🅿️ to get exclusive tips and tutorials, or feel free to buy me a coffee ☕

🎟️ Commissions on Ko-Fi

Join my Discord Server

For LCM read the version description.

Available on the following websites with GPU acceleration:

Live demo available on HuggingFace (CPU is slow but free).

New Negative Embedding for this: Bad Dream.

Message from the author

Hello hello, my fellow AI Art lovers. Version 8 just released. Did you like the cover with the ∞ symbol? This version holds a special meaning for me.

DreamShaper started as a model to have an alternative to MidJourney in the open source world. I didn't like how MJ was handled back when I started and how closed it was and still is, as well as the lack of freedom it gives to users compared to SD. Look at all the tools we have now from TIs to LoRA, from ControlNet to Latent Couple. We can do anything. The purpose of DreamShaper has always been to make "a better Stable Diffusion", a model capable of doing everything on its own, to weave dreams.

With SDXL (and, of course, DreamShaper XL 😉) just released, I think the "Swiss Army knife" type of model is closer than ever. That model architecture is big and heavy enough to accomplish that pretty easily. But what about all the resources built on top of SD1.5? Or all the users that don't have 10GB of VRAM? It might just be a bit too early to let go of DreamShaper.

Not before one. Last. Push.

And here it is. I hope you enjoy it. And thank you for all the support you've given me in recent months.

PS: the primary goal is still towards art and illustrations. Being good at everything comes second.

Suggested settings:

- I used CLIP skip 2 on some pics; the model works with that too.

- I have ENSD set to 31337, in case you need to reproduce some results, but that doesn't guarantee an exact match.

- All of the example images used highres.fix or img2img at higher resolution. Some also used ADetailer. Careful with that though, as it tends to make all faces look the same.

- I don't use "restore faces".
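As a rough sketch, the settings above could be expressed as a request body for the AUTOMATIC1111 web UI API (`/sdapi/v1/txt2img`). This assumes you run that UI with its API enabled. The 512x512 base size, the `hr_scale` and the `denoising_strength` values are illustrative assumptions, not values given in this description, and the helper name `build_txt2img_payload` is made up for the example.

```python
import json


def build_txt2img_payload(prompt, negative_prompt="", clip_skip=2, ensd=31337):
    """Build a JSON body for AUTOMATIC1111's /sdapi/v1/txt2img endpoint.

    Only the body is built here; nothing is sent over the network.
    """
    return {
        "prompt": prompt,
        "negative_prompt": negative_prompt,
        "width": 512,                # assumption: typical SD1.5 base size
        "height": 512,               # assumption
        "restore_faces": False,      # "restore faces" is not used
        "enable_hr": True,           # highres.fix
        "hr_scale": 2,               # assumption: not stated in the description
        "denoising_strength": 0.5,   # assumption: not stated in the description
        "override_settings": {
            "CLIP_stop_at_last_layers": clip_skip,  # CLIP skip
            "eta_noise_seed_delta": ensd,           # ENSD
        },
    }


payload = build_txt2img_payload("portrait of a dreaming sorceress, painting")
print(json.dumps(payload, indent=2))
```

Even with matching settings, results may still differ between machines and UI versions, so treat this as a starting point for reproducing the examples.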

For old versions:

- Versions 4 and later require no LoRA for anime style. For version 3 I suggest using one of these LoRA networks at 0.35 weight:

-- https://civarchive.com/models/4219 (the girls with glasses or if it says wanostyle)

-- https://huggingface.co/closertodeath/dpepmkmp/blob/main/last.pt (if it says mksk style)

-- https://civarchive.com/models/4982/anime-screencap-style-lora (not used for any example but works great).
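For version 3, the 0.35 weight can be applied directly in the prompt if your UI supports the `<lora:name:weight>` tag syntax (AUTOMATIC1111 does). Here is a minimal sketch; the helper `with_lora` and the file name `example_anime_lora` are hypothetical, so replace the name with whatever you called the downloaded LoRA file.

```python
def with_lora(prompt, lora_name, weight=0.35):
    """Append an AUTOMATIC1111-style LoRA tag to a prompt."""
    if not 0.0 <= weight <= 1.0:
        raise ValueError("this sketch expects a weight between 0 and 1")
    # ':g' prints 0.35 as '0.35' and 1.0 as '1'
    return f"{prompt} <lora:{lora_name}:{weight:g}>"


# 'example_anime_lora' is a placeholder file name, not a real download.
tagged = with_lora("1girl, glasses, wanostyle", "example_anime_lora")
print(tagged)
```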

LCM

Being a distilled model, it has lower quality than the base one. However, it's MUCH faster and well suited to video and real-time applications.

Use it with 5-15 steps and a CFG scale of about 2. IT WORKS ONLY WITH THE LCM SAMPLER (as of December 2023, Auto1111 requires an external plugin for it).
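A small sanity check for these LCM settings might look like the sketch below. The keys `steps`, `cfg_scale` and `sampler_name` follow the AUTOMATIC1111 API naming; the exact sampler label ("LCM" here) is an assumption and depends on the plugin or UI you use. The helper `lcm_settings` is made up for illustration.

```python
LCM_STEP_RANGE = (5, 15)  # recommended range from the description


def lcm_settings(steps=8, cfg_scale=2.0, sampler_name="LCM"):
    """Return generation settings for the LCM version, rejecting bad values."""
    low, high = LCM_STEP_RANGE
    if not low <= steps <= high:
        raise ValueError(f"use {low}-{high} steps with the LCM version")
    if sampler_name != "LCM":
        # Assumption: your UI lists the sampler under the name "LCM".
        raise ValueError("the LCM version only works with the LCM sampler")
    return {"steps": steps, "cfg_scale": cfg_scale, "sampler_name": sampler_name}


settings = lcm_settings()
print(settings)
```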

Comparison with V7 LCM https://civarchive.com/posts/951513

NOTES

Version 8 focuses on improving what V7 started. It might be harder to do photorealism than with realism-focused models, just as it might be harder to do anime than with anime-focused models, but it can do both pretty well if you're skilled enough. Check the examples!

Version 7 improves LoRA support, NSFW and realism. If you're interested in "absolute" realism, try AbsoluteReality.

Version 6 adds more LoRA support and more style in general. It should also be better at generating directly at 1024 height (but be careful with it). All 6.x releases are improvements.

Version 5 is the best at photorealism and has noise offset.

Version 4 is much better with anime (can do them with no LoRA) and booru tags. It might be harder to control if you're used to caption style, so you might still want to use version 3.31.

V4 is also better with eyes at lower resolutions. Overall it is like a "fix" of V3 and shouldn't be too different.

Results of version 3.32 "clip fix" will vary from the examples (produced on 3.31, which I personally prefer).

I get no money from any generative service, but you can buy me a coffee.

You should use 3.32 for mixing, so the clip error doesn't spread.
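To see why a flaw in one parent carries over into a mix, here is a toy sketch of a weighted-sum merge, one common way to mix checkpoints. It uses plain Python lists instead of real tensors, and the key name `text_encoder.w` and all values are made up. It does not describe what the clip error actually is, only that whatever one parent contains ends up blended into the result.

```python
def weighted_sum(model_a, model_b, alpha):
    """Blend two state dicts: (1 - alpha) * A + alpha * B for shared keys."""
    merged = {}
    for key in model_a.keys() & model_b.keys():
        merged[key] = [
            (1 - alpha) * a + alpha * b
            for a, b in zip(model_a[key], model_b[key])
        ]
    return merged


# Toy values only: the second parent deviates in its text encoder weights.
clean_parent = {"text_encoder.w": [1.0, 2.0]}
flawed_parent = {"text_encoder.w": [1.0, 4.0]}

mix = weighted_sum(clean_parent, flawed_parent, alpha=0.5)
print(mix)
```

The merged value sits halfway between the two parents, so half of the deviation is still there and would be passed on to any later mix built from this one.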

Inpainting models are only for inpaint and outpaint, not txt2img or mixing.

Original v1 description:

After a lot of tests I'm finally releasing my mix model. This started as a model to make good portraits that do not look like CG or photos with heavy filters, but more like actual paintings. The result is a model capable of doing portraits like I wanted, but also great backgrounds and anime-style characters. Below you can find some suggestions, including LoRA networks to make anime-style images.

I hope you'll enjoy it as much as I do.

Official HF repository: https://huggingface.co/Lykon/DreamShaper

Description

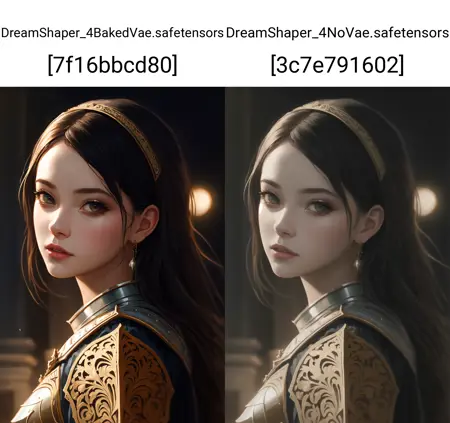

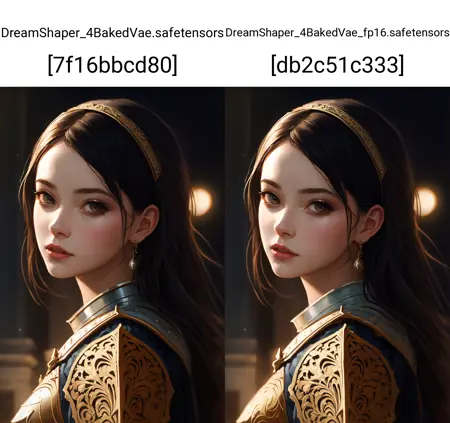

This version is the best suited for training (small, with no VAE). Tell me in the comments if you need a pruned version of this.

Comments (12)

I want to train my own checkpoint model. I have about 10,000 high-quality pictures. How can I do it? Does anyone know? If you provide relevant technical documents or solutions, I will be very grateful

There are many ways to finetune. I don't have a specific tutorial, but it should be easy to find one online.

@Lykon I have used DreamBooth, but it seems to keep failing when my training set exceeds 300 images. I am not sure whether DreamBooth can train on a large amount of content; the tutorial training sets on the web are all very small and cannot meet my needs.

These are the tutorials I used:

The official page of kohya_ss - https://github.com/bmaltais/kohya_ss/blob/master/train_db_README.md

Install video on YouTube - https://www.youtube.com/watch?v=9MT1n97ITaE

What the settings mean - https://rentry.org/59xed3#clip-skip

Between these 3 I've been able to train a LoRA to mostly do what I want, but I'm only using 143 images in my training set, and I still haven't cleaned up the auto-generated captions or created regularization images.

@ClassicalSalamander Let me try, thanks

@huangdeyin I hope it has worked for you. I have now trained two different LoRA on 1000+ image datasets with pretty good results, all using the information I learned in the three tutorials I linked in my first response.

Please let me know if you've found any other tutorials useful, I am always looking to improve. Thanks!

Using the latest 'dreamshaper_4BakedVae.safetensors'. I really want to like this Model but I can't seem to get good results. Can anyone get Booru tags working? Doesn't for me.

were you able to reproduce the examples?

I am, pretty much, although some results look really overbaked and I'm not sure why.

I was hoping this model would be good with some anime / booru tags but I can't get them working.

@tonyg3d152 hop on civitai discord and tag me in the #help channel

The best model I've used!

Your model is awesome!

Best model I used, amazing stability on different species and LoRAs!

Details

Files

dreamshaper_4NoVaeFp16.safetensors

Mirrors

4384_dreamshaper_4NoVaeFp16.safetensors

dreamshaper_4NoVaeFp16.safetensors

DreamShaper_4NoVae_fp16.safetensors

49_dreamshaper_4NoVaeFp16.safetensors
