Description:

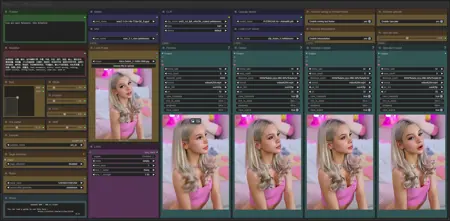

This workflow lets you generate a video from a base image and a text prompt.

You will find a step-by-step guide to using this workflow here: link

My other workflows for WAN: link

Resources you need:

📂Files :

For base version:

- I2V Model: 480p or 720p, in models/diffusion_models

For GGUF version:

- I2V Quant Model, in models/unet:
  - 720p: Q8, Q5, Q3
  - 480p: Q8, Q5, Q3

Common files:

- CLIP: umt5_xxl_fp8_e4m3fn_scaled.safetensors, in models/clip
- CLIP-VISION: clip_vision_h.safetensors, in models/clip_vision
- VAE: wan_2.1_vae.safetensors, in models/vae
- Speed LoRA: 480p, 720p, in models/loras
- ANY upscale model, in models/upscale_models:
  - Realistic: RealESRGAN_x4plus.pth
  - Anime: RealESRGAN_x4plus_anime_6B.pth
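A quick way to confirm the files from the list above landed in the right folders is a small check script. This is only a sketch: the function name `find_missing` and the `"ComfyUI"` root path are assumptions for illustration, and only the filenames spelled out in the list are checked by name. The diffusion model, GGUF, LoRA and upscale filenames depend on what you downloaded, so only their folders are checked.

```python
from pathlib import Path

# Files named explicitly in the list above, relative to the ComfyUI root.
EXPECTED_FILES = [
    "models/clip/umt5_xxl_fp8_e4m3fn_scaled.safetensors",
    "models/clip_vision/clip_vision_h.safetensors",
    "models/vae/wan_2.1_vae.safetensors",
]

# Folders whose contents depend on the variant you chose.
EXPECTED_DIRS = [
    "models/diffusion_models",  # base version
    "models/unet",              # GGUF version
    "models/loras",             # speed LoRA
    "models/upscale_models",    # any upscale model
]


def find_missing(comfy_root):
    """Return the expected files and folders that do not exist under comfy_root."""
    root = Path(comfy_root)
    missing = [p for p in EXPECTED_FILES if not (root / p).is_file()]
    missing += [d for d in EXPECTED_DIRS if not (root / d).is_dir()]
    return missing


if __name__ == "__main__":
    # "ComfyUI" is a placeholder; point it at your own install folder.
    for item in find_missing("ComfyUI"):
        print("missing:", item)
```

You only need one of models/diffusion_models or models/unet to be populated, depending on whether you use the base or the GGUF version.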

📦Custom Nodes :

Description

Big increase in stability,

added notification at the end of the queue,

new lora loader.

Comments (38)

What's different in version 6?

Everything is written in "about this version":

Big increase in stability,

added notification at the end of the queue,

new Lora loader.

More specifically, it's the "layer skip" node that's causing major stability issues; it's therefore disabled by default.

I've set up a Lora stack to accommodate as many Loras as you want.

A notification is now sent at the end of the queue.

This is an issue that's just popped up: I can't set the size and steps.

@Devilday666 The mxTool node seems to have loaded incorrectly. Sometimes closing the workflow and reloading it is enough to fix this problem.

@UmeAiRT Yeah, not working. Dunno what's gone wrong, it was working before.

@Devilday666 I did a fresh install today and I didn't have any problems with mxtool. I don't know why it's buggy for some people.

When I try enabling Sage Attention I get a weird error: "returned non-zero exit status 2"

Have you followed this guide? https://civitai.com/articles/12848

@UmeAiRT Yes, I followed that to the letter, and this is the full error:

Command '['C:\\Program Files\\Microsoft Visual Studio\\2022\\Community\\VC\\Tools\\MSVC\\14.16.27023\\bin\\HostX64\\x64\\cl.exe', 'C:\\Users\\acgam\\AppData\\Local\\Temp\\tmp57kitw94\\cuda_utils.c', '/nologo', '/O2', '/LD', '/wd4819', '/ID:\\AI Vids\\ComfyUI_windows_portable\\python_embeded\\Lib\\site-packages\\triton\\backends\\nvidia\\include', '/IC:\\Program Files\\NVIDIA GPU Computing Toolkit\\CUDA\\v12.8\\include', '/IC:\\Users\\acgam\\AppData\\Local\\Temp\\tmp57kitw94', '/ID:\\AI Vids\\ComfyUI_windows_portable\\python_embeded\\Include', '/IC:\\Program Files (x86)\\Microsoft Visual Studio\\2022\\BuildTools\\VC\\Tools\\MSVC\\14.43.34808\\include', '/IC:\\Program Files (x86)\\Windows Kits\\10\\Include\\10.0.26100.0\\shared', '/IC:\\Program Files (x86)\\Windows Kits\\10\\Include\\10.0.26100.0\\ucrt', '/IC:\\Program Files (x86)\\Windows Kits\\10\\Include\\10.0.26100.0\\um', '/FoC:\\Users\\acgam\\AppData\\Local\\Temp\\tmp57kitw94\\cuda_utils.cp312-win_amd64.obj', '/link', '/LIBPATH:D:\\AI Vids\\ComfyUI_windows_portable\\python_embeded\\Lib\\site-packages\\triton\\backends\\nvidia\\lib', '/LIBPATH:C:\\Program Files\\NVIDIA GPU Computing Toolkit\\CUDA\\v12.8\\lib\\x64', '/LIBPATH:D:\\AI Vids\\ComfyUI_windows_portable\\python_embeded\\libs', '/LIBPATH:C:\\Program Files (x86)\\Microsoft Visual Studio\\2022\\BuildTools\\VC\\Tools\\MSVC\\14.43.34808\\lib\\x64', '/LIBPATH:C:\\Program Files (x86)\\Windows Kits\\10\\Lib\\10.0.26100.0\\ucrt\\x64', '/LIBPATH:C:\\Program Files (x86)\\Windows Kits\\10\\Lib\\10.0.26100.0\\um\\x64', 'cuda.lib', '/OUT:C:\\Users\\acgam\\AppData\\Local\\Temp\\tmp57kitw94\\cuda_utils.cp312-win_amd64.pyd', '/IMPLIB:C:\\Users\\acgam\\AppData\\Local\\Temp\\tmp57kitw94\\cuda_utils.cp312-win_amd64.lib', '/PDB:C:\\Users\\acgam\\AppData\\Local\\Temp\\tmp57kitw94\\cuda_utils.cp312-win_amd64.pdb']' returned non-zero exit status 2.

@Strikefreedom213 This is strange, because I get exactly this error when I don't copy the files into Python or when VS Tools is missing.

@UmeAiRT That's what I don't understand. Do I need to install Python separately and put the files there, or is there something I'm missing? I'm using Windows 11, not sure if that matters. Do I need to install the Triton files both in the embedded Python and natively on my computer? I've triple-checked my Microsoft VS tools and I have everything installed.

@Strikefreedom213 It is recommended to install Python even though we use the one embedded in comfyui_portable. Here I give you the missing files directly, but normally you would have to retrieve them yourself from the native folder to put them in the folder of the embedded version. In theory, in your error message it gives the path of each file it needs, and you would have to check them one by one to know what is missing.
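Checking each path from the error message by hand is tedious, so here is a minimal sketch of how it could be automated. The helper names `extract_paths` and `missing_paths` are made up for illustration. It assumes you paste only the `[...]` list part of the message, and it relies on the standard MSVC conventions that `/I` introduces an include directory and `/LIBPATH:` a library directory.

```python
import ast
from pathlib import Path


def extract_paths(arg_list_text):
    """Pull the compiler path plus every /I and /LIBPATH: directory
    out of the "[...]" part of the error message."""
    args = ast.literal_eval(arg_list_text)  # safely parse the printed list
    paths = [args[0]]  # first item is the compiler executable (cl.exe)
    for arg in args[1:]:
        if arg.startswith("/IMPLIB:"):
            continue  # an output file, not an input directory
        if arg.startswith("/LIBPATH:"):
            paths.append(arg[len("/LIBPATH:"):])
        elif arg.startswith("/I"):
            paths.append(arg[2:])
    return paths


def missing_paths(arg_list_text):
    """Return the extracted paths that do not exist on this machine."""
    return [p for p in extract_paths(arg_list_text) if not Path(p).exists()]
```

Run `missing_paths(...)` on the machine that produced the error. Any path it returns is a folder or file the compiler was told to use but could not find. An empty result does not prove the install is healthy, it only rules out missing directories.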

@UmeAiRT Figured it out: I had to do a complete uninstall and reinstall of Visual Studio.

After trying a few others, this was the first video workflow I've been able to get working successfully thanks to your clear instructions / guide and requirements, linking all resources needed. I'm off the ground thanks to you!

Yeah, me too. I only use UmeAiRT's T2V and I2V workflows for my Wan work. They work well for me and I appreciate the constant updates. I have tried others, but many give me issues, so they're not worth it.

Thanks for your feedback. I'm trying to find a balance between ease of use and functionality, but it's getting complicated, so I've written the various guides.

My generations keep getting stuck at "encode Negative". I'm not sure why, but all progress just freezes no matter how long I wait.

Are you not getting any messages in the ComfyUI console? Does your computer seem to be working or just waiting?

@UmeAiRT It was just waiting. Oddly, I tried your Windows portable install and that one is currently working past the encode negative. I'm not sure what the issue is on my base Comfy, however; no error message ever appeared.

It is the very best: both Tea and Sage, and the 2 step approach makes fast and high quality videos!

The only missing thing in my opinion is a prompt creator, like Florence, or like interrogate clip...

You're an AI community treasure, great, uncomplicated flows. Appreciated!

Hi again. This workflow is still great, but I really miss some power over the story of the video. Could it be possible to add some kind of prompt scheduling to Wan 2.1? :O

Or could you maybe do a Hunyuan clone of the workflow? There seem to be more LoRAs available for it, but it is a f***ing pain to set up (for me at least). Thanks again!

The workflow works well. But I can't see the sliders in the mxslider node (frame, steps, etc). Could you please help me?

mxtools seems to be buggy with certain updates. I did a new installation with the latest updates and I can't reproduce this bug.

Found the problem: delete the Mix Lab folder.

@UmeAiRT thank you very much. Problem solved

Works great and fast, except all the videos have pretty bad non-linear color banding. Does anyone know how to fix/prevent this?

Finally a workflow that works as advertised. Thanks for the frequent updates!

Does increasing the "frames" parameter require more VRAM? If so, what's the best way to extend the duration of generation without sacrificing temporal coherence?

Yes, the more frames the video has, the more VRAM it consumes. There are tools to maintain better consistency, but I'm still working on it.
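To give a rough feel for why more frames cost more VRAM, here is a back-of-the-envelope sketch. It assumes the layout commonly described for Wan 2.1 (the VAE compresses time by 4 and space by 8, then the transformer groups each latent frame into 2x2 patches); the function name is made up for illustration, and the number it returns is a sequence length, not a VRAM figure.

```python
def wan_sequence_length(frames, width, height):
    """Approximate number of tokens the video model attends over.

    Assumptions (not taken from this workflow): temporal compression of 4,
    spatial compression of 8, and 2x2 patches per latent frame.
    """
    latent_frames = (frames - 1) // 4 + 1
    tokens_per_frame = (height // 16) * (width // 16)
    return latent_frames * tokens_per_frame


# 81 frames at 832x480 versus roughly twice the duration.
print(wan_sequence_length(81, 832, 480))   # 32760
print(wan_sequence_length(161, 832, 480))  # 63960
```

Under these assumptions, doubling the duration roughly doubles the sequence length, so the memory needed for latents and activations grows along with it.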

@UmeAiRT ComfyUI is accessing the Windows location services while I'm running version 1.6.

Any clue what node could be doing that? There's no reason why a ComfyUI node would need to know where I am

I haven't added anything location-related in 1.6, only a notification module.

@UmeAiRT It might not be specific to 1.6. I have not looked into the previous versions, but 1.6 is the one I am currently using. It is very likely that prior versions have a node requesting location data.

@thisisarandomaccount2025 I must admit that this is not intentional. We would have to see which node is making this request because I don't see where it could be coming from.

@UmeAiRT Oh sorry, yeah, I didn't mean to imply that you did this intentionally. But one of the nodes is doing it, it seems. For now I just disabled Windows location services.

This is a great workflow. Is it possible to do video to video with masking in WAN?

I need to do some research to see if it's possible, I don't know at the moment.