Description:

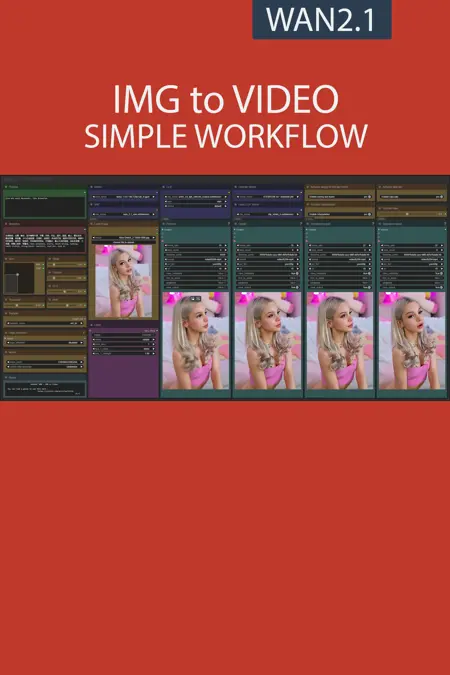

This workflow lets you generate a video from a base image and a text prompt.

You will find a step-by-step guide to using this workflow here: link

My other workflows for WAN: link

Resources you need:

📂Files :

For base version

I2V Model : 480p or 720p

In models/diffusion_models

For GGUF version

I2V Quant Model :

- 720p : Q8, Q5, Q3

- 480p : Q8, Q5, Q3

In models/unet

Common files :

CLIP: umt5_xxl_fp8_e4m3fn_scaled.safetensors

in models/clip

CLIP-VISION: clip_vision_h.safetensors

in models/clip_vision

VAE: wan_2.1_vae.safetensors

in models/vae

Speed LoRA: 480p, 720p

in models/loras

ANY upscale model:

Realistic : RealESRGAN_x4plus.pth

Anime : RealESRGAN_x4plus_anime_6B.pth

in models/upscale_models
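As a quick sanity check before loading the workflow, the folder layout above can be verified with a small script. This is only a sketch: it covers the "Common files" whose exact names are given above, assumes the realistic upscale model was chosen, and the `missing_files` helper and the `ComfyUI` root path are illustrative names, not part of the workflow.

```python
from pathlib import Path

# Folder -> file names, copied from the "Common files" list.
# Assumption: the "Realistic" upscale model is used; swap in the anime one if needed.
EXPECTED_FILES = {
    "models/clip": ["umt5_xxl_fp8_e4m3fn_scaled.safetensors"],
    "models/clip_vision": ["clip_vision_h.safetensors"],
    "models/vae": ["wan_2.1_vae.safetensors"],
    "models/upscale_models": ["RealESRGAN_x4plus.pth"],
}


def missing_files(comfy_root):
    """Return the expected model files that are not present under comfy_root."""
    root = Path(comfy_root)
    missing = []
    for folder, names in EXPECTED_FILES.items():
        for name in names:
            path = root / folder / name
            if not path.is_file():
                missing.append(path)
    return missing


if __name__ == "__main__":
    # "ComfyUI" is a placeholder for your own install directory.
    for path in missing_files("ComfyUI"):
        print(f"missing: {path}")
```

The I2V model, GGUF quants and speed LoRA are left out because their file names depend on which variant you download.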

📦Custom Nodes :

Description

Added user setting for interpolation ratio

Added new auto-prompt feature

Complete patch notes here : link


Comments (41)

I have a new issue on V7: when I want to input my own prompt, it doesn't let me and gives me a value box instead. Is there any way around this?

I just made a hotfix, can you re-upload the workflow?

I converted your script to a Python script so it can be used across OS platforms and on servers using Jupyter (like vast.ai).

I can send it over to you if you would like to add it to your description.

You can send it to me by message and it will be provided alongside the Windows version of the script.

This is odd: where the sliders for Size, Steps, Frames, etc. are supposed to appear, I only see blanks, so I can't change anything in this workflow. Does anyone know what could be causing it?

Missing nodes?

It's a compatibility issue with mixlab node : Sliders not working after update · Issue #28 · Smirnov75/ComfyUI-mxToolkit

To explain simply, the developer of mixlab wrote a method that corrupts other nodes

@UmeAiRT Cool, thanks for the fast response. I will try the fix someone pointed out in that page later. Bless ya!

@UmeAiRT yeah, that did it! Replacing line 2186 with the suggested one solved the issue. You're the man. Thanks!

The "VAE decoder (tiled)" node can cause color shift in long video generations. Replacing it with the normal VAE decoder node solves the problem.

Thanks for your feedback, I will try this solution.

After testing, this doesn't fix the problem, quite the opposite. However, what seems to completely fix this problem is using a non-quantized model. But obviously, this is slower and requires more VRAM.

@UmeAiRT Sorry, I may not have made myself clear. What I meant was that when the total number of generated frames exceeds the "temporal size" of "VAE decoder (tiled)", there will be a color shift (or contrast variation) in the frames near the temporal tile boundaries.

As for the color darkening from beginning to end, it seems that we can only avoid it by randomly generating multiple times.

↑ for v1.6. I haven't used v1.7 yet, but I think these issues still exist.
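To make the "temporal size" point concrete, here is a rough sketch of where the temporal tiles would overlap for a given clip length. It is a simplified model, not the actual tiled decoder code: the function name `tile_overlap_ranges` is made up for illustration, and the values 64 and 8 in the example are assumed settings for temporal size and temporal overlap, so check the values on your own node.

```python
def tile_overlap_ranges(total_frames, temporal_size, temporal_overlap):
    """Return (start, end) frame ranges where two consecutive temporal tiles overlap.

    Simplified model: tiles are temporal_size frames long and each new tile
    starts temporal_size - temporal_overlap frames after the previous one.
    """
    if not 0 <= temporal_overlap < temporal_size:
        raise ValueError("temporal_overlap must be >= 0 and < temporal_size")
    stride = temporal_size - temporal_overlap
    ranges = []
    tile_start = 0
    # A further tile is only needed while the current one ends before the clip does.
    while tile_start + temporal_size < total_frames:
        next_start = tile_start + stride
        ranges.append((next_start, min(tile_start + temporal_size, total_frames)))
        tile_start = next_start
    return ranges


# Assumed example settings: temporal size 64, temporal overlap 8.
print(tile_overlap_ranges(81, 64, 8))  # -> [(56, 64)]
print(tile_overlap_ranges(60, 64, 8))  # -> [] (the clip fits in one tile)
```

Under this model, a clip that fits inside a single temporal tile has no boundary at all, which is consistent with the shift only showing up in longer generations.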

Recently, I have seen some new technologies such as Teacache Retention mode, Cfg Zero Star, Zero Init, etc. Can I expect these optimizations to be added in the new version?

@keyblade I am open to any improvement. I will look into what these features do and how to integrate them into future updates.

Try using one of these resolutions: 832*480, 480*832, 512*512, 1280*720, 720*1280.
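If you are unsure which of these to pick for a given input image, a small helper can choose the one with the closest aspect ratio. This is just a sketch: the resolution list is the one from the comment above, while `closest_resolution` is an illustrative name and the "closest aspect ratio" rule is an assumption about what works best, not something the workflow does itself.

```python
import math

# (width, height) pairs from the comment above.
SUGGESTED_RESOLUTIONS = [
    (832, 480),
    (480, 832),
    (512, 512),
    (1280, 720),
    (720, 1280),
]


def closest_resolution(width, height):
    """Pick the suggested resolution whose aspect ratio best matches the input image."""
    target = math.log(width / height)
    # Comparing log ratios treats "too wide" and "too tall" symmetrically.
    return min(
        SUGGESTED_RESOLUTIONS,
        key=lambda res: abs(math.log(res[0] / res[1]) - target),
    )


print(closest_resolution(1920, 1080))  # -> (1280, 720)
print(closest_resolution(1024, 1024))  # -> (512, 512)
```

Note that this only compares shapes; whether to use a 480p or 720p size still depends on which model you loaded.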

Do the GGUF versions of wan have the same output quality as the 14b versions?

GGUF versions sit in between the regular model versions.

Some are quantized from the highest-quality version and are better than the lower-quality versions; others are quantized from those lower versions. The best quality is still the 14b version.

Depending on your hardware, pick whichever version gives the best balance of quality and resource usage that your hardware can handle.

Quantized GGUF versions are mildly inferior to the versions they are based on, but the reduction in resource requirements is worth the small quality loss.

I wish I could figure out why, but my outputs from this workflow look faded and weird compared to the basic workflow. Fluids look particularly bad, everything looks smeared with Vaseline compared to a basic workflow. I have tried disabling sage attention by right clicking and selecting bypass in the workflow and doing likewise for the apply teacache box, but I don't think it works because the output is still much faster than a basic workflow and the quality issue remains.

Have been using this workflow. Nice setup of everything. Has anyone figured out a cause or a fix for the weird color / contrast spike at about 2/3 of the way into every clip?

I have the same issue and would love to know how to avoid it.

Here too, in my case it always starts on frame 56 and lasts a few frames before returning to normal. Maybe it's something related to VAE, but I have no idea how to configure it.

Making shorter videos and merging them has been my fix.

@synalon973 @john281

It's not the same, at least for those of us looking for consistency in movement. But it looks like I already solved the problem, and I can even make beautiful 7-second videos.

You just need to replace the Decode stage nodes with new VAE DECODE nodes

1-Look for the nodes 'Decode stage 1' and 'Decode stage 2'.

2-Create two new VAE Decode nodes via Latent > VAE Decode.

3-Move the connections from the old Decode stage nodes to the new ones. Every input and output must go to the matching socket on the new node.

4-Delete the old decode stage nodes.

It worked for me.
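For anyone who prefers to patch an exported workflow instead of rewiring by hand, the same swap can be sketched in a few lines. This rests on assumptions: the workflow has been exported in ComfyUI's API format (a JSON object mapping node ids to `class_type` and `inputs`), and the "Decode stage" nodes are `VAEDecodeTiled` nodes. The function name `swap_tiled_decode` is illustrative.

```python
import copy


def swap_tiled_decode(workflow):
    """Return a copy of an API-format workflow with tiled VAE decodes replaced.

    Node ids are left untouched, so nodes that read the decoded images
    stay connected to the same node.
    """
    patched = copy.deepcopy(workflow)
    for node in patched.values():
        if node.get("class_type") == "VAEDecodeTiled":
            node["class_type"] = "VAEDecode"
            # The plain decoder only takes the latent samples and the VAE,
            # so the tiling settings are dropped.
            node["inputs"] = {
                key: value
                for key, value in node["inputs"].items()
                if key in ("samples", "vae")
            }
    return patched
```

Load the exported file with `json.load`, pass the resulting dict through the function, and write it back with `json.dump`. Keep a copy of the original, since the tiled decoder uses less memory and you may want to switch back.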

@EechiZero Wow, this actually works and has improved my generations: no more color flickering. If someone can bring this to the workflow owner's attention, that would be great for all users!

@endnote @EechiZero This is strange, because I have already tested this solution and it made my videos very unstable. Maybe an update changed something. Are you talking about the default VAE Decode node?

my result with default VAE decode : https://snipboard.io/HxUpBJ.jpg ^^'

@EechiZero @UmeAiRT The VAE decode switch worked for me. Make sure the nodes are all connected the same as before. Isn't the tiled decode just a memory saver? I am on a 4090.

Hi, I'm really enjoying this workflow, it's so clean and cool!

Also, you have written a guide to the workflow, so I can read it and build up my basic knowledge. Thank you very much.

I have a question. You said that the shift level determines the movement speed of the result. If I lower the level, it gets slower, and if I raise it, it gets faster, right?

The slider can only go up to a maximum shift level of 10, is it because going higher is not recommended?

Usually people want to reduce the speed, but if you want to go higher, just double-click on the number and type in any value directly.

Thx! I have a question: where do you control the length? Noob me can't find it. I'd like a length of 201 for a full loop.

Duration slider is the length.

@synalon973 I can't find it. I only see a "frames" slider with a maximum of 120 frames.

@Kanonno88 Are you using the simple workflow?

If you are using the simple workflow it is the yellow duration node. Directly below the green Positive prompts node.

If you are using the complete workflow it is the yellow frames node, 2 nodes down from the red Negative prompts node.

I don't recommend going much beyond 64-80 frames in one go, as trying too large a change too quickly gives me bad results. I get better results making two 64-frame videos and merging them.

@synalon973 I switched to the simple one, thx ^-^

Why can't I adjust the camera angle? Everything stays at a fixed angle, even though I have tried every prompt.

I'm not an expert on dynamic movement prompts. You'd have to look for articles from community members who have that expertise.

If it's image to image, it can take a lot of frames to gradually change the camera angle from the original image's position.

I am curious to know why this workflow has an empty CLIP encoder. What does it do, and what purpose does it serve? I like to pick things apart and see how it all works to get a better understanding of how it functions.

It will disappear in the new versions, it was linked to a bug in the display of the "positive" node when it is not directly connected to a node of this type.

I see, thanks.