Description:

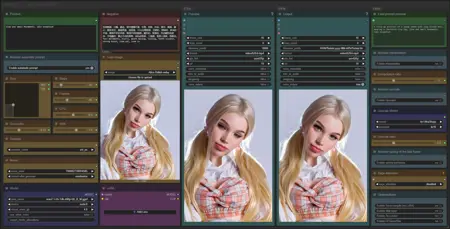

This workflow allows you to generate a video from a base image and a text prompt.

You will find a step-by-step guide to using this workflow here: link

My other workflows for WAN: link

Resources you need:

📂Files :

For base version

I2V Model : 480p or 720p

In models/diffusion_models

For GGUF version

I2V Quant Model :

- 720p : Q8, Q5, Q3

- 480p : Q8, Q5, Q3

In models/unet

Common files :

CLIP: umt5_xxl_fp8_e4m3fn_scaled.safetensors

in models/clip

CLIP-VISION: clip_vision_h.safetensors

in models/clip_vision

VAE: wan_2.1_vae.safetensors

in models/vae

Speed LoRA: 480p, 720p

in models/loras

ANY upscale model:

Realistic : RealESRGAN_x4plus.pth

Anime : RealESRGAN_x4plus_anime_6B.pth

in models/upscale_models
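The folder layout above can be checked with a short script. This is a minimal sketch, assuming you pass your own ComfyUI root directory. The function name `missing_files` and the `EXPECTED` table are illustrative, not part of the workflow. Only the file names given explicitly above are listed, since the I2V model, speed LoRA and upscaler file names depend on which variant you picked.

```python
from pathlib import Path

# File names and target folders copied from the list above.
# Model, speed-LoRA and upscaler files are left out because their
# names depend on the variant you downloaded.
EXPECTED = {
    "models/clip": ["umt5_xxl_fp8_e4m3fn_scaled.safetensors"],
    "models/clip_vision": ["clip_vision_h.safetensors"],
    "models/vae": ["wan_2.1_vae.safetensors"],
}


def missing_files(comfy_root, expected=EXPECTED):
    """Return 'folder/file' strings for expected files not found under comfy_root."""
    root = Path(comfy_root)
    missing = []
    for folder, names in expected.items():
        for name in names:
            if not (root / folder / name).is_file():
                missing.append(f"{folder}/{name}")
    return missing
```

Calling `missing_files(r"C:\path\to\ComfyUI")` (a hypothetical path) returns an empty list when the three common files are in place.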

📦Custom Nodes :

Description

Complete patchnote : link

What's new? :

Added a non-GGUF version and a version without nightly nodes,

New interface,

New upscaler,

New model optimisation,

New LoRA loader,

New model loader that can offload part of the model to RAM.


Comments (139)

It seems like in version 1.8, using a method with 3 sampling steps resulted in characters' eyes having less flickering/fewer artifacts. Maybe it's just my perception. Is the reduction in sampling steps in the newer versions primarily aimed at saving time?


I noticed the eye thing too

@DoctorAculaMD The eye flickering looks like it's caused by upscaling; this issue only appears when upscaling is applied.

hello, i tried following each step but i still have cfgzerostar, upscalertensorrt, loadupscalertensorrtmodel as missing nodes

For CFGZeroStar you need the latest nightly version of ComfyUI.

For UpscalerTensorrt and LoadUpscalerTensorrtModel you need ComfyUI-Upscaler-Tensorrt. The patch notes explain how to repair this node if it is not loading: Workflow patch notes : IMG to VIDEO 2.0 | Civitai

The new "Upscaler TensorRT" node sometimes does not install correctly.

You must therefore install a specific Python module:

.\python.exe -s -m pip install wheel-stub

If you have already installed the node, you can then install the prerequisites from the node folder:

..\..\..\python_embeded\python.exe -s -m pip install -r requirements.txt

In 2.0 you have a "compatibility" version without these two nodes.

Am having the same issue, I sorted the CFGZeroStar by just deleting the ComfyUI-KJNodes Folder and using Githubs URL to redownload it via ComfyUI but its not working when I do it with Upscalertensorrt

@UmeAiRT Do I run these commands from the Tensorrt folder in custom nodes?

@UmeAiRT ty ty i fixed the upscaler with python commands

@synalon973 you use the python embedded folder

@mrshazam Thank you.

@Devilday666 thanks that worked for me too!

Perfect, easy and functional. No problem of installation and execution. I wait for a guide updated to the latest version. Thanks for the excellent job

The guide is already updated😉 : Step-by-Step Guide Series: ComfyUI - IMG to VIDEO | Civitai

It says

Failed to find the following ComfyRegistry list. The cache may be outdated, or the nodes may have been removed from ComfyRegistry.

comfy-core

Reinstalled ComfyUI via a new installer and everything works. Thanks for the work done.

Can you help me figure out the parameter virtual_vram_gb - 4? What does the number 4 mean? Is this how much will be offloaded into RAM, or the other way around? I have a video card with 12 GB of memory and I use the Q4_K_S and Q5_K_S models; what should I set this parameter to?

i want to ask too, my vram is 8gb

I feel as if I lost a lot of performance when upgrading for the new version, even on the old workflows - and the Encode Positive step seems to really hit my CPU now.

Works great, when it works. Quite a bit quicker than version 1.8, however, It rarely runs without throwing me an OOM on my 12 gb 3080 when I never had this issue on 1.8

Hi,I can't find the missing nodes. They can't be installed via the manager, and they're not available via search:

CFGZeroStarAndInit

UnetLoaderGGUFDisTorchMultiGPU

although, when I click "install missing nodes" again, it shows me

ComfyUI-KJNodes and ComfyUI-MultiGPU (which are installed)

Wan2.1 IMG to Video 2.0 (base-C) - only this version runs

@HornyWorld NVM, I think I figured out what was happening. Delete comfyui-mxtoolkit and comfyui-kjnodes from your custom_nodes folder, then reinstall both using the following (adjust the target to the location of your custom_nodes folder):

git clone https://github.com/kijai/ComfyUI-KJNodes /ComfyUI/custom_nodes/ComfyUI-KJNodes

git clone https://github.com/Smirnov75/ComfyUI-mxToolkit /ComfyUI/custom_nodes/ComfyUI-mxToolkit

@dxjaymz Great! It helped. Now even the sliders work! Thank you very much

@_VI_ I had this error a lot too! I believe there's a problem with the case of the folder names: the original folders are lowercase, but I believe the nodes look for the files under the uppercase/mixed-case names, so a fresh reinstall fixes that. I also believe ComfyUI doesn't check this, so if you already have the node installed it doesn't fix it.

@dxjaymz I installed everything through the manager (missing mods)

I cannot get it to work, cloning the custom nodes directly from git did not help. I have missing "UnetLoaderGGUFDisTorchMultiGPU" the whole time..

@sl1d123123301 This is a different node. To fix this one, try updating your ComfyUI to the nightly version, then installing the missing nodes from the GUI. If that fails, you can try the same command I shared here but with the URL https://github.com/pollockjj/ComfyUI-MultiGPU

@dxjaymz Thanks for your quick reply. I did try cloning MultiGPU from the repository, but it's not working. I am trying the Wan2.1 IMG to Video 2.0 (gguf) version, btw. I just saw that you wrote "Wan2.1 IMG to Video 2.0 (base-C) - only this version runs". Is that version compatible with the GGUF model?

@sl1d123123301 That was not my message (he was referring to the fact that only the simplified workflow worked for him). I have the full one working, but I also had some trouble (running on Ubuntu). I'm also running Wan2.1 IMG to Video 2.0 (gguf).

@dxjaymz What did you do to run it on Ubuntu? I have Ubuntu as well.

@sl1d123123301 I rented a machine with ComfyUI already installed, I only had to add the nodes and configure, I used vast.ai

2.0 is making my video frames start at normal brightness and then immediately darken. I'm not getting this problem in 1.8.1 - any idea what's wrong?

Otherwise, 2.0 is quite a bit faster than 1.8.1. It would be amazing if there was a way to fix this brightness problem.

I think I fixed my brightness problems. I had to switch from ComfyUI nightly to stable and restart. I'm having problems getting CFGZeroStar, but I'll probably need to manually install that or just leave it off.

Hey, I've seen the guide too, but I just need one piece of info. It works and all, but for "Select your model and set virtual VRAM", is the amount I select meant to compensate for missing VRAM? For example, I have 11 GB of VRAM: if I set that value to 10, will it use all the GPU VRAM + 10 GB of RAM?

That's the theory anyway. It depends on the model, and I'm not sure it's 100% accurate. On an 11 GB card, I was using the 16 GB Q8_0 models and entered 15.5, as lower numbers sometimes didn't seem to offload as much as needed. However, it also seems to choose automatically (at least if you choose a higher number than needed).
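The arithmetic behind this question can be sketched roughly. This is only a back-of-the-envelope starting point, not how ComfyUI-MultiGPU decides anything internally. It assumes the offload amount should at least cover the part of the model that does not fit in VRAM once a reserve is kept for everything else. The function name and the `reserve_gb` default are hypothetical.

```python
def virtual_vram_starting_point(model_size_gb, vram_gb, reserve_gb=4.0):
    """Rough lower bound, in GB, for how much of the model to push to system RAM.

    reserve_gb is an assumed allowance for latents, the text encoder, the VAE
    and other working memory. It is a guess, not a measured value.
    """
    room_for_model = max(vram_gb - reserve_gb, 0.0)
    overflow = model_size_gb - room_for_model
    # Clamp: never negative, never more than the whole model.
    return min(max(overflow, 0.0), model_size_gb)
```

For a 16 GB model on an 11 GB card this gives 9.0. The commenter above reports entering a higher value (15.5) because lower numbers did not always offload enough, so treat the result as a floor to start from rather than a recommended setting.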

@rtveate011105194 thank you :D

What is this virtual VRam supposed to set to ? Not sure what is optimal

Version 2.0 is giving really wonky results... yikes... I am going to stick with older version that works beautifully.

can i see some samples of your work?

like what versions please show

Upscaling doesn't work (isn't connected to anything)

Failed to validate prompt for output 159:

* (prompt):

- Required input is missing: anything

* easy cleanGpuUsed 159:

- Required input is missing: anything

Output will be ignored

Failed to validate prompt for output 81:

Output will be ignored

Turns out you have to have interpolation on to upscale.

Heyy sorry for the noob question. I'm still new and still in the learning stage. But I have a i9 10900k cpu . Iv got 64gb ram. And a RTX 4080 gpu. I'm some what finally managing to get outputs . But I was asking if I was able to get this image clearer https://civitai.com/images/68838665 do I upload the upscale video . The interpol or what ever it's called haha. Again so sorry for the noob question.

Can you try a lower TeaCache value, or increase the resolution of the input image before running?

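Missing node types

When loading the graph, the following node types were not found:

CFGZeroStarAndInit

CFGZeroStar

I've already updated and it still doesn't work. How can I fix this? wow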

I have this problem too

@fishGO and @jelly19820921, if you have this problem and you are 100% sure that you have updated everything, open ComfyUI Manager (top right corner; if you don't have it, install it, it's very useful), press "Switch ComfyUI" and select the "nightly" version. You are probably on 0.3.27 right now. After switching, restart ComfyUI and the problem should be gone. Even without CFGZeroStar everything works and generates; you simply won't be able to use this optional node.

Me too, and I updated everything for nightly etc. I FIXED it, see how below.

Delete comfyui-mxtoolkit and comfyui-kjnodes from your custom_nodes folder, then reinstall both using:

git clone https://github.com/kijai/ComfyUI-KJNodes /ComfyUI/custom_nodes/ComfyUI-KJNodes

git clone https://github.com/Smirnov75/ComfyUI-mxToolkit /ComfyUI/custom_nodes/ComfyUI-mxToolkit

There's a mismatch between lowercase and uppercase letters in the folder names; doing what I said will fix it!

You may also be able to fix this by uninstalling both from ComfyUI, updating to the latest nightly, and then installing them again from the same GUI. I did not try this second option, though.
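If the cause really is a lowercase/uppercase mismatch in folder names, as suggested above, it can be spotted before deleting anything. The following is a minimal sketch under that assumption. The expected names are taken from the git clone commands in this thread, and the helper name `case_mismatches` is made up for illustration.

```python
from pathlib import Path

# Folder names as they appear in the git clone commands above.
EXPECTED_NAMES = ["ComfyUI-KJNodes", "ComfyUI-mxToolkit"]


def case_mismatches(custom_nodes_dir, expected=EXPECTED_NAMES):
    """Return (found, expected) pairs for folders that match only when case is ignored."""
    by_lower = {name.lower(): name for name in expected}
    pairs = []
    for entry in sorted(Path(custom_nodes_dir).iterdir()):
        if not entry.is_dir():
            continue
        wanted = by_lower.get(entry.name.lower())
        if wanted is not None and entry.name != wanted:
            pairs.append((entry.name, wanted))
    return pairs
```

An empty result means the folder names already match the repository names exactly, in which case the missing nodes probably have another cause.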

@Sleepwalking Thanks for the info, after doing a update through comfyUI manager and not through the bat update worked. Couldn't switch to the nightly version but could switch to version 0.3.28 after restarting didn't receive any messages.

@dxjaymz it works for me, ty

Interpolated and upscaled results aren't being generated and saved even when turned on.

I suspect there's something wrong in the connection or configuration of the nodes, since they also seem to try to point to a hard-coded path (F:\AI\dev\WAN2.1 V2\ComfyUI_windows_portable\ComfyUI\output\) instead of a relative one (In my case, C:\Users\User\Desktop\ComfyUI\output)

Weirdly this doesn't happen if you expand the "Save Interpolated" and "Save Upscaled" nodes
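One way to confirm the hard-coded path suspicion is to search the workflow file for absolute Windows paths. This is a minimal sketch: it assumes the workflow is a regular JSON file and makes no assumption about which node or field holds the path. The function names are illustrative.

```python
import json
import re

# Matches Windows drive paths such as F:\AI\... or F:/AI/...
_DRIVE_PATH = re.compile(r"^[A-Za-z]:[\\/]")


def find_absolute_paths(node):
    """Recursively collect string values that look like absolute Windows paths."""
    found = []
    if isinstance(node, dict):
        for value in node.values():
            found.extend(find_absolute_paths(value))
    elif isinstance(node, list):
        for value in node:
            found.extend(find_absolute_paths(value))
    elif isinstance(node, str) and _DRIVE_PATH.match(node):
        found.append(node)
    return found


def scan_workflow(json_path):
    """Load a workflow JSON file and return any absolute Windows paths inside it."""
    with open(json_path, "r", encoding="utf-8") as handle:
        return find_absolute_paths(json.load(handle))
```

If `scan_workflow` returns a path from someone else's machine, replacing that value with a relative output name in the corresponding save node is the likely fix.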

Hi friend, I'm very happy with your work, I would like to know if you can integrate wavespeed?

Thank you, it works perfectly. The only issue I faced is when I activate Sage: although it's installed and used in some other workflows, none of the 3 listed options works for me (the 2 CUDA ones and the Triton one).

I got errors of this form:

cannot import name 'sageattn_qk_int8_pv_fp16_cuda' from 'sageattention' (/home/therealdude/pytorch-env/lib/python3.12/site-packages/sageattention/__init__.py)

cannot import name 'sageattn_qk_int8_pv_fp8_cuda' from 'sageattention' (/home/therealdude/pytorch-env/lib/python3.12/site-packages/sageattention/__init__.py)

https://civitai.com/articles/12848 I would redo this walkthrough and make sure you are grabbing the right CUDA toolkit version and other versions. You should at least be able to get Triton to work after this walkthrough.
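Before reinstalling, it can help to check which of the names from the error messages the installed package actually exposes. This is a minimal sketch using only the standard library. The kernel names are copied from the errors above, and `available_names` is a hypothetical helper, not part of sageattention.

```python
import importlib

# Kernel names copied from the error messages above.
KERNELS = ["sageattn_qk_int8_pv_fp16_cuda", "sageattn_qk_int8_pv_fp8_cuda"]


def available_names(module_name, names):
    """Return {name: bool} telling whether each name is exposed by the module.

    If the module itself cannot be imported, every name maps to False.
    """
    try:
        module = importlib.import_module(module_name)
    except ImportError:
        return {name: False for name in names}
    return {name: hasattr(module, name) for name in names}
```

Running `available_names("sageattention", KERNELS)` with the same Python interpreter that launches ComfyUI shows whether the installed build is missing those functions. Other failures, such as a CUDA library that cannot be loaded, may raise different exceptions that this sketch does not catch.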

Hi friend, your work is great. I would like to know if you have any plans to add a start image and an end image to the i2v workflow, to improve image generation?

TensorRT upscaler nodes not found, even after installing, even after installing from GitHub.

You have the solution here : https://civitai.com/articles/13386#known-issue-:-kt2dsorjm

@UmeAiRT 👍ok it is working now

@UmeAiRT I can not seem to move the sliders for steps, frames, cfg etc.... and Tea cache is not connected please advise?

Can you use 2.1 lora on 14b?

I already installed the ComfyUI-Upscaler-Tensorrt, but it keep saying that was missing?

also i already did the pip install -r requirements.txt in that upscaler-tensorrt folder.

Install ComfyUI Manager and use it to check for missing nodes. If installed, use it to update the non-functional node to the latest nightly.

@stephenholli894 yeah i used the comfyui manager to install the missing node, its installed already with the latest nightly version. but it keep saying the node is missing. not sure what is the problem. any idea?

@DarkAmbassador Your problem and its solution are explained here: https://civitai.com/articles/13386#known-issue-:-kt2dsorjm

You have to install wheel-stub on your python before installing TensorRT

@UmeAiRT woah it solved my problem, thanks :D

I had everything working before, but had to put everything back on, now I get an error :

* WanVideoTeaCacheKJ 125:

- Required input is missing: rel_l1_thresh

* ModelSamplingSD3 160:

- Required input is missing: shift

node has a point for rel_l1_thresh node

Does anyone know how to solve the problem?

It looks like your optimization nodes are no longer connected to the sliders (teacache slider and shift slider). Can you just redownload the workflow to see if it fixes it?

Fixing the upscaler issue led to this error:

IMPORT FAILED ↗

ComfyUI-Easy-Use

i've tried different version, install via pip / manager, won't work.

updated comfyui and python dependencies.

ideas?

ComfyUI manager issue, it does it all the time. Download and update the custom node manually.

Thank you for including the compatibility versions!

Amazing work mate.

Doing some testing with random LoRAs and its working amazingly well. Uploading one or two so far.

What's the diff between the base and gguf jsons?

I've chucked you a few thousand buzz for the work. You are a true legend.

can you show me your link

If you add a patch_order node, torch.compile would work well with any LoRAs. Can you add it?

This v2.0 workflow works great on Kaggle with dual T4 GPU's (I’ve tested it myself).

However, it would be better if the upscaler had its own separate workflow since i can’t generate long-frame videos without running into system RAM OOM issues (with the upscaler enabled, of course).

@UmeAiRT Oh sorry I didn't check, I thought this one wasn't made yet but it was already there a week ago

and also thank you!

Where do I get all the custom nodes?

Have you heard about OptimalSteps?

https://github.com/bebebe666/OptimalSteps

Can you try including this in your amazing workflow?

Yes,please support this project.

Can anybody think of why I might be getting just a bunch of colorful static?

Please help... I'm desperate. Make it make sense! I have a 4070, and 64GB of RAM. I thought this would be enough, but I'm stuck in Sampler Stage 1, my GPU is maxed out at 100% and VRAM at 97% on COMFYUI. I tried downgrading from Q6 model to Q4, but nothing! Can't generate anything. Are there some settings for me to change? What's going on with my setup? By the way, I checked my system performance, and while it is stuck I have 34% of VRAM usage and GPU is at 20%. I also get a torch.cuda.OutOfMemoryError: Allocation on device upon first attempt, then when I run it again it just gets stuck.

What resolution are you generating at? Some suggestions:

- Try generating at a lower resolution: 480 width x 720 height

- Try generating fewer frames. Start w/ 40, and go up from there. From what I understand, all frames need to fit into VRAM simultaneously, so if you try to generate too many frames, you'll get an OOM (out of memory).

Are you using the 2.0 or 1.8.1 version of the workflow? For me, the 2.0 sits at the Sampler stages for literal hours. Which is odd since I've used my own and others' Wan 2.1 workflows and never had generations take that long. I went back to the 1.8.1 version of the workflow, which takes 1500 seconds for the entire workflow. No idea why the 2.0 takes so long, but you may want to try an earlier version or another workflow since that should work. I have a 4060 with 8 GB of VRAM, I'm running Q6, 20 steps, 81 frames, 416x608 resolution, Sage Auto, Tea cache .13. Your 12 GB of VRAM should do better than my 8. I have added these flags to my ComfyUI shortcut as well --lowvram --t5_cpu --offload_model True

I have the same configuration of a 4070 12GB PC and 64 RAM, I generate with a maximum resolution of up to 768x544 30 steps, I use the standard model from wan2.1 i2v 480p 14b bf16.

768x544 takes a long time to generate, so I mostly use 720x480 or 360x480, and I also use interpolation to get a better picture.

if you use gguf, then the model is q4 k-s(m) or q3 k-s(m)

I haven't really figured it all out yet, but this is what works for me 100%.

prolly the resolution is too big, it runs on 720 Q4 on my 3080 10gb, so you should fly

It looks like an update to the node used for the positive prompt broke the workflows. On a side note, thanks for creating these!

Mine also does not take in any positive prompt.

Same here. The positive prompt is ignored.

The latest ComfyUI update broke quite a few nodes, it takes a while for everything to update.

@UmeAiRT Indeed, it was a catastrophe. I had everything on Linux and some basics installed on Windows. I switched to Windows and started fixing everything, since on Windows things seem better even on the latest update, but now I'm blocked by the Florence 2 node not working.

@etekoo455 rolling back comfyui to any earlier release doesn't seem to fix the positive prompt issue

@UmeAiRT Hi! How much time is for this? 🥲

Yeah, I had to grab a different positive node type and rewire it a bit to get it working for me. I'm now using the "Easy-Use" positive node. I think you can get their custom nodes in the Manager or here: https://github.com/yolain/ComfyUI-Easy-Use

I just dragged the "Positive" node directly to "Encode positive", seems to work, it's a temporary fix for now...

@Eliz103 Fixed ^^

Hello again. =)

It so happened that I had to upgrade from 3060 to 5070ti. The latest card has Cuda version 12.9. I had to completely change the approach to ComfyUi and other software. Most of the problems were solved, but several appeared again in this Workflow:

Missing Node Types:

- WanImageToVideo

- CFGZeroStar

- UpscalerTensorrt

- LoadUpscalerTensorrtModel

I tried to install all the nodes manually and through the manager, but these 4 always remain

It seems like after a day I managed to run the model. The biggest problem was in the Upscaler.

However, for some reason my 3060 generated an image faster than the 5070ti, which is several times more powerful than the 3060. How is that?

@_VI_ You got me worried, I plan to do exactly the same upgrade in the next few days and I thought that by now the software stack should be more or less ready for blackwell cards

@LezardNoire Thank you very much, I will wait! I wanted to update to speed up the work, but it turned out quite the opposite)

Previously I could create a +- good video of 5-6 seconds in 35 minutes, now it takes about 3 hours :DD

@_VI_ Same parameters? Usually when it slows down this much it's because vram is lacking and too much data needs to be offloaded to the system memory

@LezardNoire Yes, I prepared it in advance to compare the speed difference between the cards. The same image, the same parameters, except that there were problems with the Cuda cores, but everything was fixed. It generates images much faster than the old card. But the video is the opposite.

@LezardNoire After many hours of suffering, it began to work better and launch. This defies any logic. I reinstalled comfyui, python, custom nodes dozens of times, installed them from the manager and from links from git. I don't even know what exactly helped. However, now only the gguf version works (which was the only one that didn't work on the old card before). Now everything is the other way around. But now at least there is some result. The speed is good. I wouldn't wish on my enemy what I had to face these past couple of days.

@_VI_ yeah sometimes it's hard to find the magic combination of python, cuda and node versions that work, a few days ago I had to reinstall the OS to a new SSD and I ended up copying the old python environment to the new installation because the one I created from scratch gave me problems.

Besides Wan 2.1 was released just a couple of months ago so it's quite normal that the sw stack isn't very mature. Glad you could make it work!

@LezardNoire Thank you, I'll have nightmares about all this now. Deleted-installed-deleted-restored from the trash = it worked.

@LezardNoire It's a pity that in case of something there is no pattern or logical chain of actions. It's completely random that it started up in the end.

how to install these nodes? I get the same issue

@lily99ww662 It's very hard to explain. It was like luck. I installed it first from the mod manager - then deleted it, then installed it from links, then deleted the links, then installed it from the mod manager, then added it from other links, after several restarts it started miraculously

@lily99ww662 I was at least able to clear the tensorrt node issues by following this lovely person's commands to manually install the necessary pip packages. Still working on the CFGZeroStar issue though.

Thanks for building this workflow. It's pretty awesome.

I'm trying to use it with the General NSFW lora (https://civitai.com/models/1307155). One of the tips there say to edit the blocks that are used for inference (https://imgur.com/a/S05tdk0). Is that the same thing as the Skip Layer setting?

Apologies in advance for the naive question.

help

Failed to find the following ComfyRegistry list. The cache may be outdated, or the nodes may have been removed from ComfyRegistry.

comfyui-kjnodes

comfyui-kjnodes

Thanks UmeAiRt for this incredible workflow! Unfortunately, the 2.0 does not work well for me. But, the 1.8.1 is the best out there right now imho and I feel like I've tried and created dozens of Wan 2.1 workflows. The deal is that 2.0 takes 2-6 hours (sitting in the sampler stages) to generate a 5 second video for me and sometimes gives me errors or crashes. With the same models and settings the 1.8.1 version of the workflow takes 25 minutes and works every time with superb results. Granted, I only have a piddly RTX 4060 with 8 GB of VRAM, but the 1.8.1 version of the workflow does not make me feel the limitation and generates excellent videos.

how to fix

When loading the graph, the following node types were not found

CFGZeroStar

UnetLoaderGGUFDisTorchMultiGPU

CLIPLoaderMultiGPU

I'm getting missing UnetLoaderGGUFDisTorchMultiGPU as well no matter I what I tried to do it never works.

@DrHojo123 UnetLoaderGGUFDisTorchMultiGPU comes from ComfyUI-MultiGPU

@San_Andreas seems to fix it, but how do I change the model? When trying to change the UnetLoader it just says undefined.

@DrHojo123 This kind of problem can occur if your ComfyUI frontend is not up to date. The latest updates break my old workflows, but when I update them, they no longer work for older versions.

As of 20/04, primitive positive prompt panel stopped passing the values after last updates applied to nodes. The issue can be fixed by just replacing it with another prompt panel (easyuse in my case). It works but without the primitive feature of course.

Could you please advise? I downloaded the kijai 16 GB 480p model and it hangs on launch. What settings are best? This is my first time trying video generation. My graphics card has 16 GB of VRAM, but the memory bus is weak and it has few CUDA cores.

Try a workflow for GGUF and go for lowest quant first, e.g. Q3. It is not guaranteed to work with RTX 20xx and 10xx cards

Regardless of how small or short I try to make the video, it always tops out my vram. I've got a 4090 and this hasn't been an issue on other wan workflows.

Can you currently recommend a WAN workflow thats currently working? They're all busted for me right now. I'm also getting OOM on 4090.

@Minase460 If none of the workflows work for you, it's probably because the problem isn't with the workflow, but with your comfyui installation. I recommend you do a clean install of it or use my script that will do it for you: ComfyUI auto installer WAN2.1 | GGUF | UPSCALE - v2.0 | Wan Video Other | Civitai

@UmeAiRT I have done this already using your auto installer. I used it to make a clean install and install all models. It is still not working. Out of memory error and crashes my comfy. I have tried V1.8 and V1.7. I have tried 12+ workflows now. Either the nodes are broken or out of memory errors.

@Minase460 Which version of the model are you using?

@UmeAiRT The one loaded by default in the workflow is wan2.1-i2v-14b-480p-Q8_0.gguf

RTX 4090 24gb -

Got an OOM, unloading all loaded models.

I have a 3090 with 24gb and it runs out of memory just a few minutes into doing anything. I appreciate the effort and hope there is a fix or at least some suggestions from the community.

Watch VRAM usage, opt for lower quant and/or virtual ram option. Both GGUF at Q8 and the base models push VRAM usage up to the limit even with 24gb. Consider reducing number of frames to 61.

@joesixpaq I sized the image down to 344x512, am literally only doing 8 frames as a test, and cut the batch size down to 1. I get that my hardware was the latest and that compared to some of the freaking fast models out there I need to have reasonable expectations, but I've been seeing posts about people doing stuff with way weaker cards. I guess I'm not understanding things yet.

@povgeek Again, what is your VRAM usage before you start ComfyUI? Check your system monitor

Using the features of Comfyui-MultiGPU, running out of memory should never be an issue.

You have something else going on. I can push out 120 frame videos at 480x720 with the upscaler and interpolation turned on. I am using a 12 gb 3080. Try an isolated clean install of comfy-ui.

@S3X_R0B0T Yeah I just made a separate ComfyUI branch with the dedicated installer and have it working now. It's pretty amazing. I was playing around with Framepack and some other models, but the quality on this one is so much better AND it can use LORAs.

I just made a separate ComfyUI branch with the dedicated installer and have it working now. It's pretty amazing. I was playing around with Framepack and some other models, but the quality on this one is so much better AND it can use LORAs. Amazing! Thanks so much!

@povgeek Glad you got it working! Have fun!